“I just want to be part of great stories that are told and for them to be relevant.” – Zoe Saldana

Before diving into this lengthy post, I want to sum up, from a 10,000-foot view, my thoughts on how link building is changing in light of the Penguin update.

I also want to make clear that this is an opinion-based piece and is designed to promote debate – and motivate people smarter than me to develop this theory, or crush it.

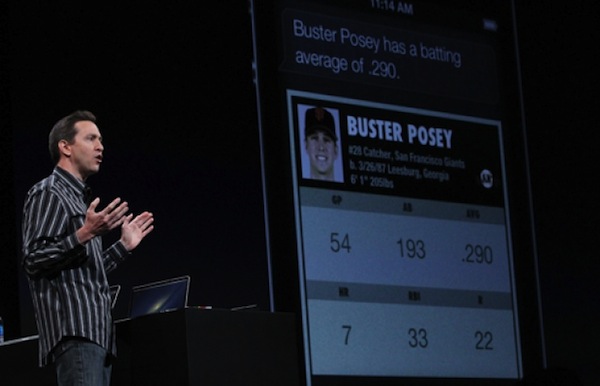

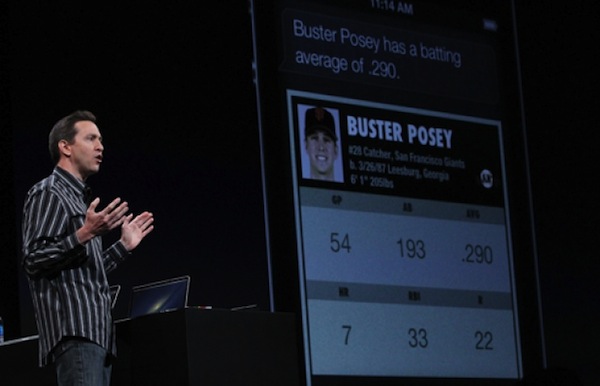

The core proposition of this post is this: Google is afraid. Afraid that its crown as king of search will be stolen by a new breed of voice-led search engines, headed by a Wolfram|Alpha-powered Siri.

It’s afraid because Siri’s partnership with the currently small but semantic-from-the-ground-up Wolfram|Alpha gives them both the reach they need to change the game. And because Wolfram|Alpha is better at semantic search, the nirvana for search engineers, it is a justifiable fear.

Google’s problem in competing in this space is the existing link graph. At present it’s muddied with millions of artificial links, created by people to game its old document retrieval algorithm.

Penguin is Google’s attempt to begin clearing the waters again and to promote links that help it understand the semantic relationships between pieces of content. As a result, relevance is now the key to link building, and value-adding content is its currency.

It’s a bold statement, but below I attempt to explain my reasoning in a little more detail.

Link Building and the Semantic Web

This post came to me a few days ago as I sat in a pub at an informal SEO meetup.

At the next table, two guys were discussing why Google is a bit like Madonna. A random conversation if ever there was one, but from it an idea began to take shape.

Their argument was that Madonna is constantly chasing relevancy. The problem, as far as they were concerned, was that “she was only relevant when she was good in the ’80s.”

This, it was argued, is the “trap” Google is in danger of falling into. Like a plastic surgery-obsessed star who chases perfection but achieves the opposite, the search engine is scared of “getting old and irrelevant.”

This has been thrown into stark relief by the launch of Siri, a search concierge powered by the aforementioned Wolfram|Alpha computational knowledge engine.

Penguin is Google’s attempt to start clearing up its “old world” search operation and move it toward a semantic future: a place where relevance is king.

There is therefore a paradigm shift taking place in the way Google works, as it attempts to move from organizing information through a document retrieval process to organizing it around semantics and an understanding of user intent.

In many ways we’re on the cusp of an entirely new world where, rather than managing information, Google manages knowledge (see its recent announcements on the Knowledge Graph as an example of this in action). Instead of matching keywords to documents, it wants to match them to concepts.

As a result, the days of building links on valueless and irrelevant sites such as directories and link networks are over. In their place comes a boost for hard-earned links from highly relevant sites. And as I sift through more and more data, I am seeing a correlation between rank-enhancing links and their relevance to the subject document.

To truly answer the question of what these changes mean, however, we must look in more detail at how search engines organize data and why relevance is going to be key moving forward.

Semantic Data

Search experts have been talking up the “semantic web” for years, and no doubt you will have read about how it will “transform the landscape.” For those who have not yet had the pleasure, let’s explain the basics of what it really means.

While the semantic web has many facets, at its core it is about organizing data in a way that helps search engines understand the intent behind a search query. It makes that process easier by mapping things like words and phrases to “entities” (people, places, etc.) and the relationships between them. The word semantics literally means “the study of meaning.”

The move to a ranking system based on semantics was never going to be easy. It means dumping, or at least placing less reliance on, the PageRank model that made Google the business it is today.

Apple’s Siri has a bit of a head start when it comes to creating usable interfaces and platforms with semantic data at their heart, because it does not have a muddied and artificial link graph to contend with.

In short, the data Apple is using to power its search hasn’t been “gamed,” and that makes it easier for Apple to get ahead. Wolfram|Alpha has even created a data visualization project mapping how computable knowledge (as they call it) has evolved through the ages. Ominously, Google is but a blip on the timeline.

Google, however, has a hatful of relevant patents that will ensure it has a very big say in how this all plays out.

What is Relevancy in a Search Context?

We all think we understand what being “relevant” really means in a search context, but if we are to understand where Google may be going with it and how it measures relevance, we must first dig into some more detail.

First, search engines define relevance in a handful of different ways. Broadly speaking:

- Topical Relevance: This looks at what a page is “about” and whether it is related to the search phrase.

- Cognitive Relevance: Where the search engine looks at the quality of information.

- Situational Relevance: Search engines attempt to understand the motivations behind a search by looking at how the information it retrieves may help or be useful in the decision-making process.

- Motivational Relevance: This is the relation between the intents, goals, and motivations of a user and what they may need from the search returns.

Already you can see how much goes into the average search in those milliseconds before results are returned.

Of course, Google doesn’t just look at a document to work out how relevant it is. It takes in hundreds of external relevance signals, and the most prominent of those are link-based.

Link Relevance

When assessing the relevance of links, Google uses several interesting relevancy scores to weight any given link, and this is where things are changing.

Personalization is making some links more important for some users than for others. Below we look at a handful of the main “culprits” behind the changing way in which relevance works:

- Personalized Anchor Text Score: A way of measuring the relevance of a document to a particular user. The anchor text is given a personalized score, which is then combined with the document’s normal information retrieval score to generate a personalized ranking for that document (it’s the basis of personalized rankings).

- Topic-Sensitive PageRank: More of a link quality-based algorithm, used by Google to scale its ability to personalize search rankings. In other words, PageRank is biased according to what the user is looking for. This makes relevant inbound links very important, as having them makes it clear what you’re relevant for.

- Reasonable Surfer Model: Considers the navigational use of a link and gives it more or less importance based on how likely it is to be clicked to navigate to further information. This is where contextual content links can earn extra “power.” It also suggests that a link in an H1 might carry more weight, as it may be clicked more often.

- Hilltop Model: A really interesting one, as it is relatively old but offers Google a way of re-engineering link weight. Basically, it assigns “expert” status to one or more sites or pages around a specific topic, and any page or site that receives a link from that expert source then has a much better chance of ranking for that term. This could be playing a much larger part post-Penguin as Google looks to clear the waters again in link terms and understand what’s important from a “brand” perspective.
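To make the Topic-Sensitive PageRank and Reasonable Surfer ideas a little more concrete, here is a minimal, textbook-style sketch in Python. It is not Google’s implementation: the link graph, page names and per-link weights are all hypothetical. The weights stand in for how likely a surfer is to click each link, and the “topic” is expressed as the set of pages the random surfer jumps back to, which biases rank toward pages that sit close, in link terms, to that topic.

```python
def topic_sensitive_pagerank(links, topic_pages, damping=0.85, iterations=50):
    """Power-iteration PageRank with a topic-biased teleport vector.

    links: dict mapping page -> {target_page: weight}. Each weight is a
    hypothetical stand-in for how likely a surfer is to click that link
    (Reasonable Surfer style); equal weights give classic PageRank.
    topic_pages: pages the surfer "teleports" to, i.e. the topic bias.
    """
    pages = set(links)
    for targets in links.values():
        pages.update(targets)
    pages = sorted(pages)

    seeds = [p for p in pages if p in topic_pages]
    if not seeds:
        raise ValueError("topic_pages must contain at least one page in the graph")
    # Teleport only to on-topic pages instead of uniformly to every page.
    teleport = {p: (1.0 / len(seeds) if p in topic_pages else 0.0) for p in pages}

    rank = dict(teleport)
    for _ in range(iterations):
        new_rank = {p: (1 - damping) * teleport[p] for p in pages}
        for page in pages:
            outlinks = links.get(page, {})
            total = sum(outlinks.values())
            if total == 0:
                # Dangling page: send its rank back through the teleport vector.
                for p in pages:
                    new_rank[p] += damping * rank[page] * teleport[p]
            else:
                # Split rank across outlinks in proportion to their weights.
                for target, weight in outlinks.items():
                    new_rank[target] += damping * rank[page] * weight / total
        rank = new_rank
    return rank


# Hypothetical graph: 3.0 mimics a prominent in-content link,
# 1.0 a less prominent one.
links = {
    "bbq-blog": {"grill-shop": 3.0, "directory": 1.0},
    "grill-shop": {"bbq-blog": 1.0},
    "directory": {"grill-shop": 1.0, "casino": 1.0},
    "casino": {"directory": 1.0},
}

ranks = topic_sensitive_pagerank(links, topic_pages={"bbq-blog"})
for page, score in sorted(ranks.items(), key=lambda kv: -kv[1]):
    print(f"{page:12s} {score:.3f}")
```

With the surfer biased toward the BBQ blog, the grill shop it links to prominently outranks the casino page that is only reachable via the directory. Bias the teleport set toward the casino instead and that page’s score rises, which is the sense in which the same links can pass different amounts of “juice” depending on what is being searched for.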

So what does this tell us? It certainly goes some way to explaining the many ways Google can alter its algorithms to affect link building and the relative weight, or value, of inbound links.

It also explains how links pass differing amounts of “juice” based on who is searching or how or what they are searching for.

There is a good reason why Google is tinkering with this particular area as part of Penguin, too, and that motivator is its fast-moving competitive landscape.

Apple’s virtual assistant presents a very real threat to Google’s dominance, and most of Google’s fear stems from the fact that Siri’s “engine” is already better at semantic search. In other words, Wolfram|Alpha’s data is better structured, allowing it to understand user intent, piece together related topics and deliver more relevant results.

Take a search for the temperature, for instance. The end game for search is to “know” where you are (so you don’t have to type in the place) and then deliver not only the temperature there but also results anticipating your next searches, based on a combination of personal data and big data trends.

Understanding the relationship between 40 degrees and the fact that you might be looking for BBQ products or parasols, as an example, is extremely valuable.

Why Does this Affect Marketers?

The problem for Google is that artificial link building practices have “muddied” the link graph, yet Google is now looking to that same graph not only to rank content via the document retrieval model of old, but also to power its relevance-based semantic model of the future.

This is why relevant links are so valuable. They help the engines better understand relationships in the real sense of the word: how one piece of content is improved by another on a related theme. Google’s search patents increasingly describe ways of measuring relevance and connections.

Marketers should begin to think about how their business, or their clients’ businesses, can contribute to the Knowledge Graph, and ensure that the sites they manage take advantage of the various opportunities now being presented by Schema.org markup.
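As one illustration of contributing structured data, here is a minimal Python sketch that emits a schema.org `Organization` description as a JSON-LD script tag for a page template. It assumes JSON-LD, which is one of several ways to publish schema.org markup alongside microdata and RDFa; the function name, business name and URLs are hypothetical placeholders, not a prescribed setup.

```python
import json


def organization_jsonld(name, url, logo=None, same_as=None, description=None):
    """Build a schema.org Organization description as a JSON-LD <script> tag.

    Hypothetical helper: values are assumed trustworthy. Untrusted text would
    need "<" escaped so it cannot close the script tag early.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
    }
    if logo:
        data["logo"] = logo
    if description:
        data["description"] = description
    if same_as:
        # sameAs ties the entity to its profiles elsewhere, helping disambiguate it.
        data["sameAs"] = list(same_as)
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


snippet = organization_jsonld(
    name="Example BBQ Supplies",  # hypothetical business
    url="https://www.example.com/",
    logo="https://www.example.com/logo.png",
    same_as=["https://twitter.com/example"],
    description="Barbecue equipment and outdoor cooking advice.",
)
print(snippet)
```

The output can be dropped into a page’s HTML; the same pattern extends to other schema.org types (products, articles, events) that describe what a business is actually relevant for.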

Content Marketing

It doesn’t take a rocket scientist to work out why a content-led approach helps Google improve search: it provides more information for its semantic engine, and great content links to other great content.

The Hilltop model will be important here, helping Google power its “brand-led” push by handing “expert” status to “brands” and enabling them to rank better than the sites of old that generated traffic, visibility and sales based simply on spammy backlinks.

Becoming a hub of thought leadership and expertise will give out and attract the type of links Google wants and needs – and you’ll be rewarded for that effort.

Takeaways

Although this post is intrinsically an opinion piece, it will hopefully provide some understanding of why so many are writing about the importance of content marketing now.

If you take anything away from the post, I hope it is this:

- Google is super-motivated to clean up search and kill off non-value-adding sites to stop Siri from stealing valuable ad revenue.

- The search giant is in the process of remodeling how it structures and organizes its data. Penguin is just a small part of that process, but expect the process to continue so that Google can compete in the semantic space.

- To win in the ‘new world’ you must contribute to the semantic web and add value. Invest in quality content for both on-site and off-site marketing.

- Think carefully about what you want to be relevant for and what you link out to, as this will also affect your relevancy.

This recent video from Google sums up nicely where the company is taking this. For the edited highlights, skip to the 11-minute mark, where engineering director Scott Huffman explains the three main hurdles the company has to clear in order to create the search engine of the future.