This article is a simple breakdown of how to use an SEO site crawler to quickly identify duplicate content. There are many tools out there, but Screaming Frog is one of the most popular and powerful crawlers, and it is the spider of choice for this tutorial.

The first step to any site crawl is configuration. Limit the pages to be crawled in any way you see fit, as it is typically in everyone’s best interest to avoid scraping the entire Internet.

The parameters shown in the screenshots below were chosen for this example crawl of Costco.com. With the “Search Total Limit” set to 100, Screaming Frog will crawl only the first 100 URLs it comes across.

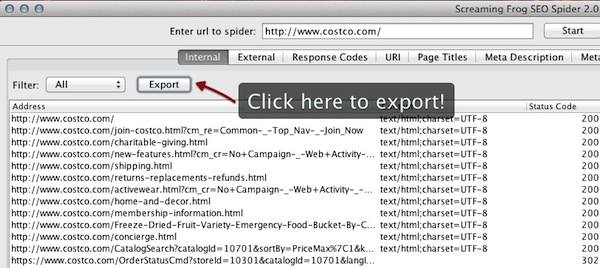

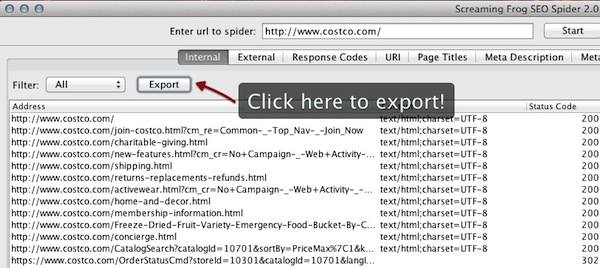
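To make the effect of a total-URL limit concrete, here is a minimal sketch of a breadth-first crawl that stops after a fixed number of URLs. It is not how Screaming Frog is implemented; `crawl_with_limit`, `get_links`, and the toy `SITE` link graph are hypothetical names and data invented for illustration, and no network requests are made.

```python
from collections import deque
from typing import Callable, Iterable


def crawl_with_limit(
    start_url: str,
    get_links: Callable[[str], Iterable[str]],
    max_urls: int = 100,
) -> list[str]:
    """Breadth-first crawl that stops once max_urls distinct URLs are crawled."""
    seen = {start_url}
    queue = deque([start_url])
    crawled = []
    while queue and len(crawled) < max_urls:
        url = queue.popleft()
        crawled.append(url)
        # Queue each newly discovered link exactly once.
        for link in get_links(url):
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return crawled


# Toy link graph standing in for a real site (hypothetical data).
SITE = {
    "/": ["/a", "/b"],
    "/a": ["/b", "/c"],
    "/b": ["/"],
    "/c": [],
}

print(crawl_with_limit("/", lambda u: SITE.get(u, []), max_urls=3))
# ['/', '/a', '/b']
```

In a real crawl, `get_links` would fetch the page and extract its links; the cap simply bounds how many pages are ever requested.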

Once the parameters have been specified, type in the address of the site and click Start. In most crawling tools, URLs are shown as they are requested. When the crawl is complete, click Export.

While Screaming Frog was used to produce the results shown below, any tool that can request, parse, and export the following data will work:

- Address

- Status Code

- Page Title

- Meta Data

- Meta Refresh

- Canonical

There is beauty in simplicity, and this report is simple but effective. To the right of the Status Code column is the Page Title column, sorted in ascending order with duplicate values highlighted (using conditional formatting in Excel). The columns to the right of Page Title show whether those pages contain directives that search engines would follow.

- Meta Data: Will display any Meta Robots Noindex tags

- Meta Refresh: This is occasionally used to redirect users

- Canonical: Used on duplicate (or subset) pages to point to the authoritative or ranking URL
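For readers without a dedicated crawler, the three directive columns above can be pulled out of a page's HTML with Python's standard library. The sketch below is one possible approach, not Screaming Frog's method; the `DirectiveParser` class and the `SAMPLE` markup (on a made-up `example.com` page) are hypothetical.

```python
from html.parser import HTMLParser


class DirectiveParser(HTMLParser):
    """Collects the title, meta robots, meta refresh, and canonical of one page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.meta_robots = ""
        self.meta_refresh = ""
        self.canonical = ""
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag and attribute names; values may be None.
        a = {k: (v or "") for k, v in attrs}
        if tag == "title":
            self._in_title = True
        elif tag == "meta":
            if a.get("name", "").lower() == "robots":
                self.meta_robots = a.get("content", "")
            elif a.get("http-equiv", "").lower() == "refresh":
                self.meta_refresh = a.get("content", "")
        elif tag == "link" and "canonical" in a.get("rel", "").lower().split():
            self.canonical = a.get("href", "")

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


# Made-up markup for illustration; not taken from any real page.
SAMPLE = """<html><head>
<title>Example Page</title>
<meta name="robots" content="noindex, follow">
<meta http-equiv="refresh" content="5; url=http://example.com/new">
<link rel="canonical" href="http://example.com/page">
</head><body></body></html>"""

parser = DirectiveParser()
parser.feed(SAMPLE)
print(parser.title, parser.meta_robots, parser.meta_refresh, parser.canonical, sep=" | ")
```

A page that uses none of these directives simply leaves the corresponding fields as empty strings, which matches a blank cell in the exported report.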

Continuing with the method: scanning the Page Title column for duplicates highlighted in pink turns up what look to be duplicate Customer Service pages in the image shown above.

http://www.costco.com/customer-service.html

http://www.costco.com/customer-service.html?cm_re=Common-_-Top_Nav-_-Customer_Service

Glancing to the right, it’s clear that Meta Data and Meta Refresh are not being used, but both pages contain a canonical pointing to:

http://www.costco.com/customer-service.html

This is great news! It means they’re using self-referential canonicals to handle at least some of their duplication.

Scrolling through the remainder of the data, there could be many more instances of this same pattern, so it is easier to look for duplicate Page Titles where the canonical is blank. For large data sets, filters are a good way to do this, but since this is just a sample crawl, you can see below that it’s pretty obvious.
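For a larger export, the same filter (duplicate Page Titles with a blank canonical) can be scripted. The following is a rough sketch that assumes the export is a CSV with columns named `Address`, `Page Title`, and `Canonical`; real export headers may differ. The URLs come from this crawl, while the page titles and status codes in `EXPORT` are placeholders, and `duplicate_titles_missing_canonical` is a hypothetical helper.

```python
import csv
import io
from collections import defaultdict


def duplicate_titles_missing_canonical(rows):
    """Return {title: [addresses]} for titles shared by two or more URLs
    where at least one of those URLs has an empty Canonical value."""
    by_title = defaultdict(list)
    for row in rows:
        by_title[row["Page Title"].strip()].append(row)
    flagged = {}
    for title, group in by_title.items():
        if len(group) > 1 and any(not r["Canonical"].strip() for r in group):
            flagged[title] = [r["Address"] for r in group]
    return flagged


# Placeholder titles and status codes; column names are assumptions.
EXPORT = """Address,Status Code,Page Title,Canonical
http://www.costco.com/customer-service.html,200,Customer Service,http://www.costco.com/customer-service.html
http://www.costco.com/customer-service.html?cm_re=Common-_-Top_Nav-_-Customer_Service,200,Customer Service,http://www.costco.com/customer-service.html
http://www.costco.com/,200,Home,
http://www.costco.com/?cm_re=Common-_-Top_Nav-_-Home,200,Home,
"""

rows = list(csv.DictReader(io.StringIO(EXPORT)))
flagged = duplicate_titles_missing_canonical(rows)
for title, addresses in flagged.items():
    print(title, addresses)
```

With this sample data, the Customer Service pair is skipped because both rows carry a canonical, and only the group with blank canonicals is reported.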

Wait a minute, what’s that there, Costco? Looks like they forgot to use their canonical strategy for the home page!

http://www.costco.com/

http://www.costco.com/?cm_re=Common-_-Top_Nav-_-Home

http://www.costco.com/TopCategories?langId=-1&storeId=10301&catalogId=10701

Throwing those duplicate pages into Open Site Explorer and Majestic SEO revealed no backlinks, but since those pages are internally linked and navigable, users can still link to them, which could split link equity. Best practice suggests adding a self-referential canonical to the home page so that any indexing signals earned by the URLs containing tracking parameters are consolidated where they belong: on the ranking page.
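One way to see which crawled URLs differ only by a tracking parameter is to strip that parameter and compare what remains. This is a minimal sketch using Python's `urllib.parse`; the `TRACKING_PARAMS` set (containing only `cm_re`, the parameter seen in this crawl) and the `strip_tracking` helper are assumptions made for illustration.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption: cm_re is the only tracking parameter we care about here.
TRACKING_PARAMS = {"cm_re"}


def strip_tracking(url: str) -> str:
    """Return the URL with any tracking query parameters removed."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in TRACKING_PARAMS
    ]
    return urlunsplit(parts._replace(query=urlencode(kept)))


print(strip_tracking("http://www.costco.com/?cm_re=Common-_-Top_Nav-_-Home"))
# http://www.costco.com/
print(strip_tracking(
    "http://www.costco.com/customer-service.html?cm_re=Common-_-Top_Nav-_-Customer_Service"
))
# http://www.costco.com/customer-service.html
```

URLs that collapse to the same string after stripping are candidates for sharing one canonical. The `TopCategories` URL is untouched by this helper, since its parameters are not tracking parameters, so it still needs a canonical of its own to be consolidated.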

Site crawlers should be used with care! It is possible to bring down a site by crawling it too quickly. That said, crawlers are key to identifying SEO issues on a site and to understanding the scale at which a particular problem occurs.
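If you script your own requests rather than relying on a tool's speed settings, a simple guard is to enforce a minimum gap between them. The sketch below shows one way to do that; `fetch_politely`, its parameters, and the stub `fake_fetch` are hypothetical, and the one-second default is an arbitrary illustration rather than a recommended rate.

```python
import time
from typing import Callable, Iterable, Iterator, Tuple


def fetch_politely(
    urls: Iterable[str],
    fetch: Callable[[str], str],
    min_interval: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> Iterator[Tuple[str, str]]:
    """Yield (url, result) pairs, leaving at least min_interval seconds
    between the start of consecutive requests."""
    last = None
    for url in urls:
        if last is not None:
            wait = min_interval - (clock() - last)
            if wait > 0:
                sleep(wait)
        last = clock()
        yield url, fetch(url)


def fake_fetch(url: str) -> str:
    """Stub standing in for a real HTTP request (no network access)."""
    return "fetched " + url


for url, result in fetch_politely(["/", "/a", "/b"], fake_fetch, min_interval=0.05):
    print(result)
```

Passing `sleep` and `clock` as parameters keeps the pacing logic easy to test without actually waiting.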