Avoid Blocking CSS and JavaScript to Prevent Suboptimal Rankings

Following its announcement about crawling JavaScript and CSS files, Google released a free tool that can test and help solve robots.txt-related SEO problems and is compatible with Bing.


This article breaks down the recently released free tool, Fetch as Googlebot, which has everything you need in a basic robots.txt tester for both Bing and Google. Here’s how you can use it to meet your robots.txt SEO needs.

First, a little background on the tool’s release. While making it crystal clear that it had crawling and understanding JavaScript down to a science, Google declared to the SEO world that “Disallowing crawling of JavaScript or CSS files in your site’s robots.txt directly harms how well our algorithms render and index your content and can result in suboptimal rankings.”

After this announcement, Google followed its usual pattern and gave webmasters additional functionality in its free tool, Fetch as Googlebot, so they can check whether this is happening on their site. The search giant also published documentation explaining how to fix it.

While this is a great tool, it isn’t a comprehensive solution for every SEO robots.txt issue. Here is why:

Simply navigate to the robots.txt testing tool by:

Scrolling through the XML Sitemap Declarations and User Agent Disallow checking sections, you will see:


Below you can see kuhl.com has a JavaScript resource that is disallowed by the robots.txt on static.criteo.net.

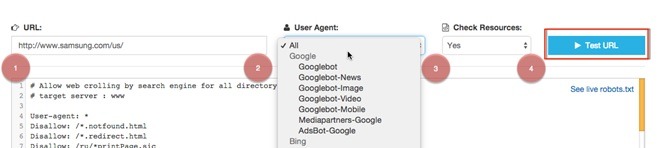
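The same kind of check can be roughly approximated offline. The sketch below is not how the tool itself works; it uses Python's standard `urllib.robotparser`, and the hosts, rules, and resource URLs are all hypothetical placeholders. It tests each page resource against the robots.txt of the host that serves it, which is why a third-party script can be blocked even when your own robots.txt is clean. Note that Python's parser does simple prefix matching and does not understand the `*` and `$` wildcards that Google supports, so treat the result as an approximation.

```python
from urllib.parse import urlsplit
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt bodies, keyed by the host that serves them.
ROBOTS_BY_HOST = {
    "www.example.com": "User-agent: *\nDisallow: /private/\n",
    "static.example-cdn.net": "User-agent: *\nDisallow: /\n",
}

# Hypothetical resources referenced by a single page.
RESOURCES = [
    "https://www.example.com/assets/app.js",
    "https://www.example.com/assets/site.css",
    "https://static.example-cdn.net/js/loader.js",
]


def blocked_resources(resource_urls, robots_by_host, user_agent="Googlebot"):
    """Return the resource URLs that their own host's robots.txt disallows."""
    parsers = {}
    blocked = []
    for url in resource_urls:
        host = urlsplit(url).netloc
        if host not in parsers:
            parser = RobotFileParser()
            # A host with no known robots.txt is treated as allowing everything.
            parser.parse(robots_by_host.get(host, "").splitlines())
            parsers[host] = parser
        if not parsers[host].can_fetch(user_agent, url):
            blocked.append(url)
    return blocked


print(blocked_resources(RESOURCES, ROBOTS_BY_HOST))
# ['https://static.example-cdn.net/js/loader.js']
```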

There’s often a need to check if a URL is blocked by directives in the robots.txt. This tool takes it a step further by checking the URL in question against any User Agent specified in the robots.txt.

For example, here we can see that samsung.com is blocking Yandex from crawling their U.S. homepage.

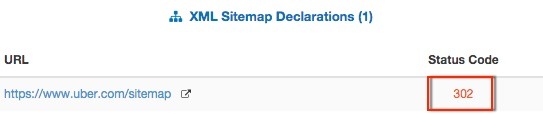
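For a quick local version of this per-user-agent check, a minimal sketch with Python's standard `urllib.robotparser` might look like the following. The robots.txt rules and the URL are made up for illustration and are not samsung.com's actual file, and the list of user agents is an assumption you would replace with the crawlers you care about.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: one crawler is blocked from /us/, everyone else is allowed.
ROBOTS_TXT = """\
User-agent: Yandex
Disallow: /us/

User-agent: *
Disallow:
"""


def check_url_for_agents(robots_txt, url, agents):
    """Map each user agent to True (allowed) or False (blocked) for the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


result = check_url_for_agents(
    ROBOTS_TXT,
    "https://www.example.com/us/",
    ["Googlebot", "Bingbot", "Yandex"],
)
print(result)
# {'Googlebot': True, 'Bingbot': True, 'Yandex': False}
```

Python's parser applies the first matching rule and does plain prefix matching, so results can differ from a search engine's own interpretation when a file relies on wildcards or overlapping Allow and Disallow rules.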

Additionally, any XML Sitemap Declarations in the robots.txt will be checked to make sure they’re accessible by search engines.


Search for a robots.txt tester and you will find that most results are in desperate need of an update and a redesign.

Big thanks to Max Prin for finally developing a good tool for SEOs that isn’t associated with or exclusively for Google.