I started my career in web development and web application development, and I’m glad I did. I use my programming skills on a regular basis in SEO, and especially when performing SEO audits.

SEO tools can be incredibly helpful during your analysis, but nothing trumps human SEO intelligence. The right tools combined with the right SEO intelligence can yield incredible results.

No matter how many SEO tools you use, you will eventually end up staring at the source code of a website. There’s not a single audit I’ve completed during my career where I haven’t been neck deep in the site’s code at some point.

If you know what you’re looking at, and what might seem off, you can nip SEO problems in the bud. On the flip side, if you miss those harmful pieces of code, and leave them unattended, you leave the site in question open to SEO damage. And that’s never a good thing.

What Can You Find in the Source Code?

The more appropriate question is, “What can’t you find?” The more audits I complete, the more code I end up analyzing, and the more I understand the importance of picking up problems that can impact SEO. The right implementation of various tags, scripts, etc. is critically important.

Whenever I present an audit, I always explain the importance of having a clean and crawlable website. And that includes making sure you aren’t throwing the search engine bots for a loop (which happens often).

When presenting some of the problems you can pick up, most clients have no idea that those issues were present on the site. And that includes people on the marketing team, design team, and even the development team (in certain situations). Nobody is perfect, and the wrong code can easily slip through to production.

8 Examples of What You Can Find

Below are eight examples of what you can find in the source code of a website that can impact your SEO efforts. And these little gremlins can cause big SEO problems.

This post won’t cover every coding problem you can find that impacts SEO, but it does cover some of the most common issues you’re likely to encounter while performing technical SEO audits, as well as some next steps and recommendations.

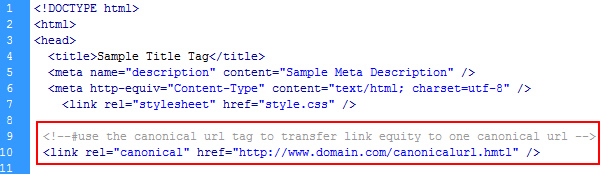

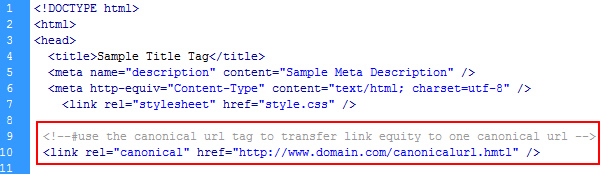

1. Canonical URL Tag Issues

The canonical URL tag can be extremely powerful when trying to deal with duplicate content. It’s a relatively simple tag that can consolidate link equity from across multiple URLs containing the same content.

When the canonical URL tag is implemented incorrectly, the simple and helpful tag can turn evil in a split second. You can check out a previous post of mine that explains how one line of code could destroy your SEO, and one of the cases I covered explained how a broken canonical URL tag yielded catastrophic results for an ecommerce provider.

Recommendation: Only use the canonical URL tag if you fully understand what the end result will be. If you’re unclear about how to best use rel canonical, then don’t implement it at all. The ramifications could be severe.
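
As a rough illustration of what to look for, here is a minimal Python sketch (standard library only) that pulls every rel=canonical tag out of a page’s HTML and flags two common problems: more than one canonical tag, and a canonical that is not an absolute http(s) URL. The function name `check_canonical` and the warning wording are my own assumptions for the example, and relative canonicals are technically allowed, so treat the output as a prompt for a manual look rather than a verdict.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class CanonicalCollector(HTMLParser):
    """Collects the href of every <link rel="canonical"> in a document."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr_map = dict(attrs)
        # rel can hold several space-separated tokens, so split before comparing.
        rel_tokens = (attr_map.get("rel") or "").lower().split()
        if "canonical" in rel_tokens:
            self.hrefs.append(attr_map.get("href") or "")


def check_canonical(html):
    """Return a list of warnings about the canonical tag(s) in the HTML."""
    collector = CanonicalCollector()
    collector.feed(html)
    warnings = []
    if len(collector.hrefs) > 1:
        warnings.append("multiple canonical tags found")
    for href in collector.hrefs:
        parsed = urlparse(href.strip())
        # Catches empty hrefs, relative paths, and typos such as "http//site.com".
        if parsed.scheme not in ("http", "https") or not parsed.netloc:
            warnings.append(f"canonical is not an absolute http(s) URL: {href!r}")
    return warnings
```

An empty list means none of these particular checks fired. It does not tell you whether the canonical points at the right URL, which still takes a human to judge.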

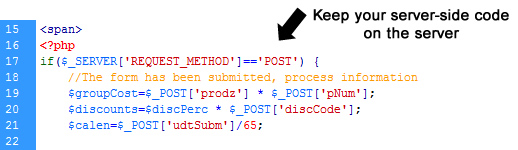

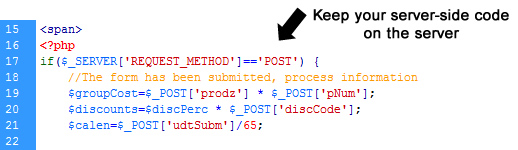

2. Server-Side Code Showing Up Client-Side

When checking the HTML source code, you might come across server-side code like PHP, C#, VB.net, etc. sitting in the HTML. Needless to say, this code should never end up in the HTML source code, for several reasons:

- The code obviously isn’t being processed on the server, so the page is missing the intended content or functionality.

- You could be revealing information to snooping competitors, or worse, hackers.

- Depending on how the code resolves on the page, it could show up for any visitor to see (in plain sight). And that includes Googlebot as it crawls your website.

Recommendation: Make sure your server-side code stays server-side. Ensure your programmers work with your designers and front-end developers to keep their server-side code hidden from users (and working on the page at hand).
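
One way to spot leaked server-side code in bulk is to scan the delivered HTML for the opening delimiters those languages use. The sketch below is a hedged example, not a complete detector: the pattern table and the name `find_server_side_code` are assumptions of mine, it only covers PHP-style (`<?php`, `<?=`) and ASP-style (`<%`) delimiters, and some client-side template libraries also use `<% %>`, so expect the occasional false positive.

```python
import re

# Opening delimiters that should normally be consumed on the server.
# Illustrative only; extend the table for the stack you are auditing.
SERVER_SIDE_PATTERNS = {
    "PHP": re.compile(r"<\?(?:php\b|=)", re.IGNORECASE),
    "ASP/ASP.NET": re.compile(r"<%[@=#:]?"),
}


def find_server_side_code(html):
    """Return (line_number, language, line_text) for each suspicious line."""
    findings = []
    for line_number, line in enumerate(html.splitlines(), start=1):
        for language, pattern in SERVER_SIDE_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_number, language, line.strip()))
    return findings
```

Run it against the HTML the server actually returns (what you see in "view source"), not against the template files in your repository, where these delimiters are expected.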

3. CSS Manipulation & Hidden Content

During audits, there are times I notice a large amount of HTML content in the source code that doesn’t match up to what is displayed on the page. Unfortunately, sometimes that content never makes its way to the visible page at all.

Sometimes this code is benign (mistakenly hidden), and sometimes it’s more sinister (someone trying to stuff a page full of keyword-rich content). If you didn’t review the code, you would have little shot of seeing this content. And this situation could cause problems on several levels SEO-wise.

There are several CSS techniques for hiding content, including moving the content off-page (location-wise), using white on white text, etc. If you pick this up in the source code, then it’s important to present the problem to your client as soon as possible. Let’s face it, either you can tell them about it, or Google can at some point. I’d choose the former over the latter.

Recommendation: Visit your website with JavaScript and CSS disabled. What you find might be enlightening. If you notice anything strange, like boatloads of keyword rich content that you never saw before, dig into your code to find out what’s going on. Get your developers and designers in the room too. Fix the problem quickly.
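
To complement the manual check, a small script can flag elements whose inline style attribute uses one of the common hiding techniques. This is only a sketch under clear assumptions: the rule list and the name `find_hidden_inline_styles` are mine, and it inspects inline `style` attributes only, so content hidden through external stylesheets, classes, or white-on-white colors will not show up here.

```python
import re
from html.parser import HTMLParser

# Label plus pattern for a few well-known ways of hiding content with CSS.
HIDING_RULES = [
    ("display:none", re.compile(r"display\s*:\s*none")),
    ("visibility:hidden", re.compile(r"visibility\s*:\s*hidden")),
    ("large negative text-indent", re.compile(r"text-indent\s*:\s*-\d{3,}")),
    ("large negative left offset", re.compile(r"left\s*:\s*-\d{3,}")),
]


class HiddenStyleFinder(HTMLParser):
    """Records (tag, rule label) for each inline style that matches a rule."""

    def __init__(self):
        super().__init__()
        self.findings = []

    def handle_starttag(self, tag, attrs):
        style = (dict(attrs).get("style") or "").lower()
        for label, pattern in HIDING_RULES:
            if pattern.search(style):
                self.findings.append((tag, label))


def find_hidden_inline_styles(html):
    finder = HiddenStyleFinder()
    finder.feed(html)
    return finder.findings
```

Plenty of hidden elements are legitimate (menus, modals, tabs), so each hit needs a human decision about whether the hidden content is benign or keyword stuffing.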

4. Meta Robots Problems

Similar to the canonical URL tag, the meta robots tag can be both greatly helpful, and incredibly destructive. It seems to be a confusing topic for people outside of SEO, which leads to some strange implementations.

Using the meta robots tag, you can instruct the search engines to not index a certain page, not follow any links on the page, etc. As you can imagine, the wrong directives can lead to catastrophic results.

For example, you might find important pages with “noindex, nofollow” on the page. Wonder why a page isn’t ranking well? You are telling the engines to not index the page!

Recommendation: First, check if you are implementing the meta robots tag. If you are, then your next move is to understand how you’re using it. For example, are you noindexing a bunch of important pages, is the tag malformed, etc? If you find you are using the tag across most of your site (and your site is large), then you can use a tool like Screaming Frog to gather meta robots data in bulk. Then drill into the code on specific pages to double check the implementation.
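
When you drill into a specific page, the check itself is simple enough to script. The following sketch reads the meta robots tag and reports whether the page is telling the engines not to index it. The helper names `robots_directives` and `is_blocked_from_index` are hypothetical, and the sketch looks only at the HTML, so it assumes no robots directives are being sent through HTTP headers.

```python
from html.parser import HTMLParser


class MetaRobotsCollector(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr_map = dict(attrs)
        name = (attr_map.get("name") or "").strip().lower()
        if name in ("robots", "googlebot"):
            content = attr_map.get("content") or ""
            # Directives are comma-separated and case-insensitive.
            self.directives.extend(
                token.strip().lower() for token in content.split(",") if token.strip()
            )


def robots_directives(html):
    collector = MetaRobotsCollector()
    collector.feed(html)
    return collector.directives


def is_blocked_from_index(html):
    """True if the page carries noindex (or "none", which implies noindex)."""
    directives = robots_directives(html)
    return "noindex" in directives or "none" in directives
```

Feeding it the HTML of your most important landing pages is a quick way to confirm that none of them is carrying a stray noindex.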

5. Multiple Head Elements, Title Tags & More

In SEO, the HTML head element contains some important pieces of information. For example, the head contains the title tag, meta description, canonical URL tag, etc. During audits, it’s important to check the various HTML elements located in the head of the document to make sure they are correctly structured and well-optimized.

But what if you find two (or more) of each tag? And to make matters worse, sometimes you might find the extra tags are empty or malformed.

Imagine you spent a lot of time performing keyword research, optimizing title tags, etc. Then you end up presenting two sets of titles to the search engines (and one is completely empty or malformed). Needless to say, you don’t want this happening. The great news is that this is typically a quick fix.

Recommendation: Ensure your HTML head element is in order on each page of your website. There are some incredibly important elements and tags present there, and you want to make sure they show up properly, that they are correctly coded, and optimized.
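
Duplicate head elements are easy to count programmatically. Here is a minimal sketch that tallies head, title, meta description, and canonical tags and reports any that appear more than once. The name `duplicated_head_elements` is an assumption for the example, and note one known limitation: a `<title>` inside an inline SVG would also be counted, so confirm any hit in the source.

```python
from collections import Counter
from html.parser import HTMLParser


class HeadElementCounter(HTMLParser):
    """Counts the elements that should appear at most once per page."""

    def __init__(self):
        super().__init__()
        self.counts = Counter()

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        if tag in ("head", "title"):
            self.counts[tag] += 1
        elif tag == "meta" and (attr_map.get("name") or "").lower() == "description":
            self.counts["meta description"] += 1
        elif tag == "link" and "canonical" in (attr_map.get("rel") or "").lower().split():
            self.counts["canonical"] += 1


def duplicated_head_elements(html):
    """Return {element: count} for every element that appears more than once."""
    counter = HeadElementCounter()
    counter.feed(html)
    return {name: n for name, n in counter.counts.items() if n > 1}
```

An empty dictionary means each of these elements appears at most once. It says nothing about whether the single copy is empty or malformed, which is still worth checking by eye.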

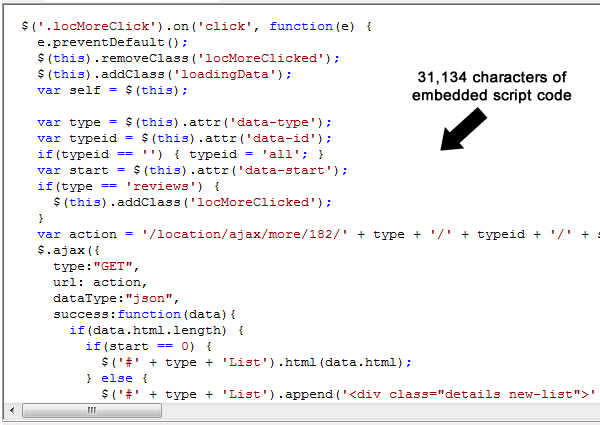

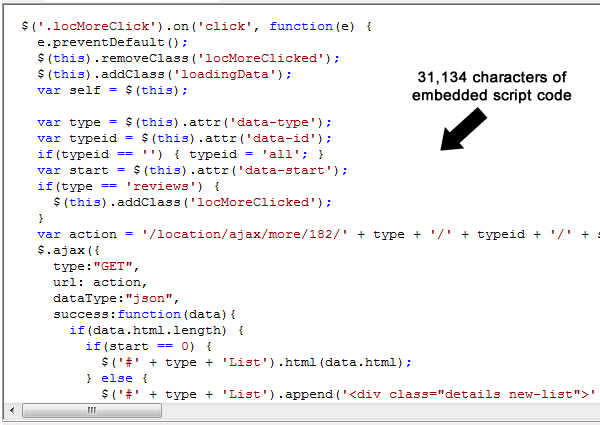

6. Excessive Script Code

Most web pages use JavaScript for one reason or another. When doing so, you can either embed that code on the page or separate it into its own file. The latter is definitely a better choice latency-wise, code-separation-wise, etc.

When checking the source code of a website, you might find excessive amounts of script code embedded on each page. I performed a recent audit where several key pages had over 30,000 characters of embedded script code per page. And some of that code wasn’t even used on the site anymore. The extra embedded script code could be slowing down your pages, throwing errors, etc.

Recommendation: Check your page speed and your embedded scripts today. You never know what you’ll find code-wise. Based on what you find, consolidate your scripts, remove unnecessary scripts, and use external script files. Tidy up your client-side code.
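
To put a number on "excessive," you can measure how many characters of script are embedded directly in a page. The sketch below is one possible approach, with `measure_scripts` as a hypothetical helper name: it totals the characters inside inline script elements and separately lists the external files referenced through `src`.

```python
from html.parser import HTMLParser


class InlineScriptMeter(HTMLParser):
    """Totals characters of inline script and lists external script sources."""

    def __init__(self):
        super().__init__()
        self.inline_chars = 0
        self.external_sources = []
        self._in_inline_script = False

    def handle_starttag(self, tag, attrs):
        if tag != "script":
            return
        src = dict(attrs).get("src")
        if src:
            self.external_sources.append(src)
        else:
            self._in_inline_script = True

    def handle_data(self, data):
        # Only count text that sits inside a <script> without a src attribute.
        if self._in_inline_script:
            self.inline_chars += len(data)

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_inline_script = False


def measure_scripts(html):
    """Return (inline_character_count, list_of_external_script_urls)."""
    meter = InlineScriptMeter()
    meter.feed(html)
    meter.close()
    return meter.inline_chars, meter.external_sources
```

Running this across your key templates gives you a per-page figure to compare, which makes it easier to decide where moving code into external files would pay off first.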

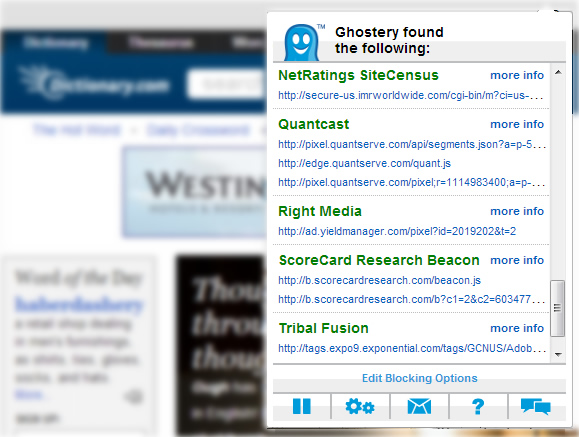

7. Analytics Tagging Problems

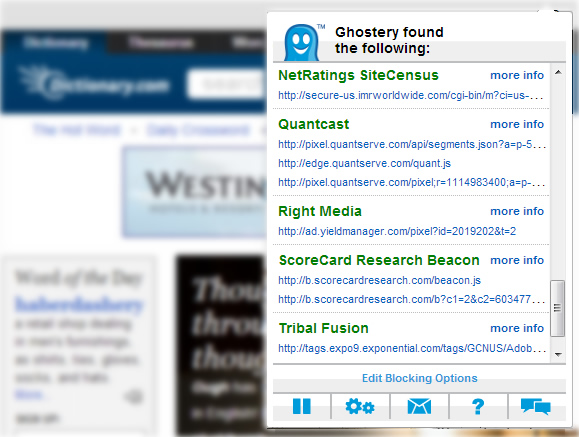

With all of the tracking solutions available today, there’s a good chance you have several analytics snippets included in your webpages. That’s fine, as long as you know which ones are there (and that they are rendering and tracking correctly).

By double-checking your source code, you can not only find which snippets are loading on your pages, but you can also find improper tagging. That improper tagging could be keeping your pages from loading correctly, and it obviously won’t track properly in your analytics packages.

In addition, you might find rogue tracking snippets on your site. Maybe they were implemented by developers or designers who worked on the site a while ago, by one of your agencies, consultants, etc.

If the snippets are present in your code, then they are tracking your site activity and are available to someone. Don’t let your site reporting get in the hands of external organizations! That data in the wrong hands can put your organization at a serious disadvantage.

Recommendation: Check your source code for analytics snippets today. You can also use a plugin to kick-start your efforts, like Ghostery. Once you determine which tracking snippets are on your site, dig into your code to find them. Then decide what should stay, and what should go.
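
A simple starting point for that inventory is to list every third-party host your pages load scripts from. The following sketch does that with the standard library; `third_party_script_hosts` and the `site_host` parameter are names I made up for the example. It only sees external scripts referenced through a `src` attribute, so tracking code pasted inline, or injected later by a tag manager, would need a separate look.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class ScriptSourceCollector(HTMLParser):
    """Collects the src attribute of every external <script>."""

    def __init__(self):
        super().__init__()
        self.sources = []

    def handle_starttag(self, tag, attrs):
        if tag == "script":
            src = dict(attrs).get("src")
            if src:
                self.sources.append(src)


def third_party_script_hosts(html, site_host):
    """Return a sorted list of script hosts that are not site_host or its subdomains."""
    collector = ScriptSourceCollector()
    collector.feed(html)
    hosts = set()
    for src in collector.sources:
        host = urlparse(src).hostname  # None for relative URLs such as /js/site.js
        if host and host != site_host and not host.endswith("." + site_host):
            hosts.add(host)
    return sorted(hosts)
```

Any host on the resulting list that nobody on the team can account for is a candidate rogue snippet and worth tracing back to whoever added it.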

8. Malformed Anchors and Canonicals

Your internal linking structure is extremely important SEO-wise. When linking to important internal pages, you definitely want to make sure links are coded properly. If not, broken links can lead to a poor user experience while also inhibiting the search engine bots from effectively crawling your site.

In addition, you don’t want to inhibit the flow of PageRank through your site. It’s important to ensure you link from top-level pages to sibling and child pages effectively. For example, a link from an important category page to a product page within that category is important to have in place.

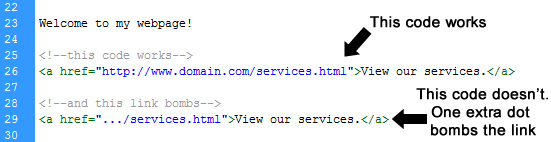

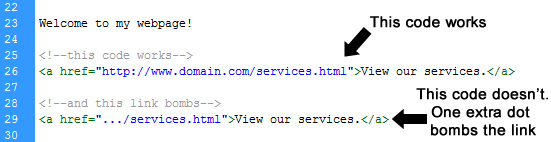

When checking the source code of documents, you might find malformed anchor tags. I find this more often when sites are linking to documents using relative paths versus absolute paths.

Dot notation can easily be entered incorrectly. For example, one extra slash or dot and the link won’t work. And it could be months before you pick up the problem. In a worst-case scenario, the problem is widespread and it’s impacting thousands of links on your site.

This can also happen with the canonical URL tag. If you happen to use the improper format, or the wrong URL, then you can absolutely kill the SEO power for the page at hand. And if it’s widespread, you can kill the SEO power of your entire site. I’m not exaggerating.

Recommendation: Take extra steps to ensure your links are working properly. Be careful when using a relative path, since it’s easy to add extra slashes or dots. As with any code, adding or subtracting even one character can bomb the entire link. And be especially careful with rel=canonical. The wrong URL or a malformed tag could kill your SEO efforts. By the way, checking links and rel=canonical is a great time to leverage tools like Screaming Frog or Xenu. Once you have your crawl report, dig into the source code to find any problems.
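
For the relative-path mistakes described above, a short script can surface the most obvious slips before you crawl. This is a sketch with assumed heuristics, not a validator: `suspicious_links` is a hypothetical name, and the three checks (a doubled slash in the path, three or more consecutive dots, a scheme with no host) are simply patterns that tend to come from typos. Each flagged link is returned alongside the URL it resolves to from the page, using `urljoin`, so you can test it by hand.

```python
import re
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class AnchorCollector(HTMLParser):
    """Collects the href of every <a> element."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href is not None:
                self.hrefs.append(href)


def suspicious_links(html, page_url):
    """Return (href, resolved_url, reasons) for links that look mistyped."""
    collector = AnchorCollector()
    collector.feed(html)
    flagged = []
    for href in collector.hrefs:
        parsed = urlparse(href)
        reasons = []
        if "//" in parsed.path:
            reasons.append("doubled slash in path")
        if re.search(r"\.{3,}", parsed.path):
            reasons.append("three or more consecutive dots")
        if parsed.scheme in ("http", "https") and not parsed.netloc:
            reasons.append("scheme without host")
        if reasons:
            flagged.append((href, urljoin(page_url, href), reasons))
    return flagged
```

Some servers tolerate a doubled slash, so a flag here means "check this link," not "this link is broken." A crawler report remains the better source of truth for what actually returns an error.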

Next Steps and Recommendations

It’s critically important to understand what’s in your source code. The site may look pretty, but there could be evil gremlins roaming around your code. If you’re interested in learning more about what lies beneath your pages, then here are some recommendations:

- At a minimum, learn the basics of web development. That includes HTML, JavaScript, and CSS. Understanding these three components will go a long way.

- Learn server-side programming (even basic server-side programming). Whether it’s PHP, ASP.net, etc., understanding how server-side code works, and how webpages are dynamically built, is extremely important. It could save your site one day.

- Understand the core SEO coding elements and how they can impact your website. For example, meta robots, the canonical URL tag, nofollow, authorship markup, etc. Once you do, you can combine your programming skills with SEO best practices coding-wise. It’s a win-win.

- Don’t solely rely upon SEO tools or software. They are meant to be a starting point for your analysis, and not the end-all. If you generate a report from an SEO tool, you still need to understand what it means, and then take action.

Summary – It’s in the Code

Hopefully you now understand why your source code matters for SEO. When performing SEO audits, you’ll find yourself neck deep in the code at some point. And when you do, it comes down to your knowledge of what’s right, what’s wrong, and what’s really, really wrong. Good luck.
