Just in time for the annual Black Hat and Defcon security conferences in Las Vegas, Nevada, security firm Incapsula has released its latest report on the state of Googlebot (and its evil twin). The news isn’t good for those of us who spend our days staring at website statistics.

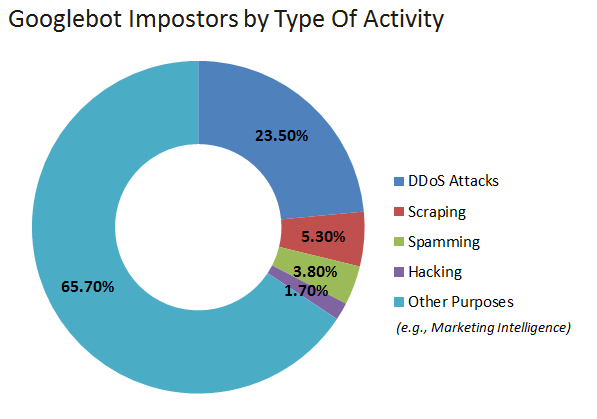

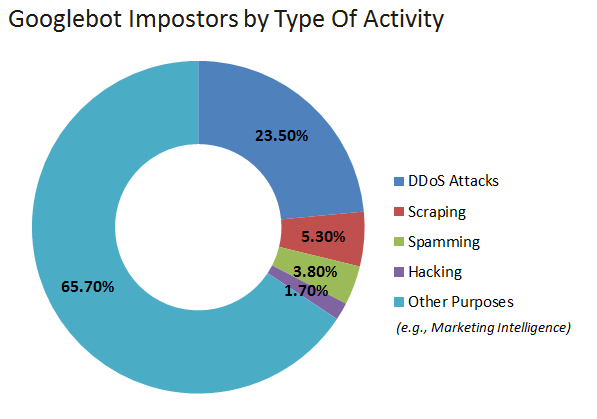

Malicious activity is up 61 percent, and one in every 24 visits is a fake bot. More than 34 percent are used for DDoS attacks, hacking, scraping, spamming, or other malicious activities.

So what does this mean for you?

Methodology

Incapsula studied:

…over 400 million search engine visits to 10,000 sites, resulting in over 2.19 billion page crawls over a 30 day period.

Information about Googlebot impostors (a.k.a., Fake Googlebots) comes from inspection of more than 50 million Googlebot impostor visits, as well as findings from our ‘DDoS Threat Landscape’ report, published earlier this year.

Incapsula’s Findings

When Incapsula looked at the standard Googlebot, there were some interesting discoveries.

Most notably, Googlebot crawls more pages than all other search engines combined, accounting for 60.5 percent of page crawls.

What they found when analyzing these visits was also a bit unexpected.

- Yahoo has dropped out of the top five crawlers or engines.

- Majestic 12 Bot, Majestic SEO’s web crawler, has taken fourth place.

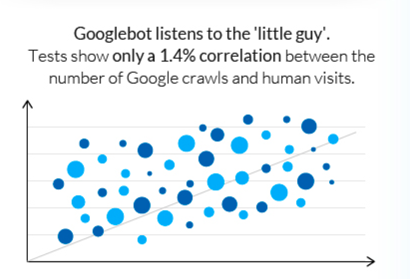

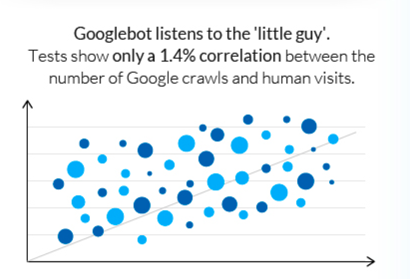

- Google doesn’t play favorites.

- There is virtually no correlation between site size and:

  - Crawl frequency.

  - Crawl rates.

  - Crawl depth.

  - SEO performance.

So we know that Google is the largest generator of bot visits to your site, that these visits are triggered by something other than site activity and SEO performance, and that Google “listens” to the little guy.

All in all, pretty good. But it isn’t Google we’re troubled by; it’s its “evil” twins we need to be cautious about (there may be many, and some of them are quite convincing).

User-Agents

The way we tell which bots are visiting our sites is to look up their user agents, or calling cards, in our log files. A user agent tells us which OS, computer type, and browser a visit came from. For example, a user agent might look like this:

Mozilla/5.0 (Macintosh; Intel Mac OS X 10.9; rv:30.0) Gecko/20100101 Firefox/30.0

This tells us someone used Firefox 30 on a Macintosh running OS X Mavericks. User agents also identify spiders, programs, and bots, and they are how we validate who is running around on our sites.
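As a rough illustration of reading a user agent, here is a minimal Python sketch. The `describe_user_agent` function and the `CRAWLER_TOKENS` list are hypothetical names made up for this example, and the token list is deliberately incomplete. It only reports what the string claims, which is not proof of who actually sent it.

```python
import re

# Hypothetical, non-exhaustive list of crawler tokens; extend it for your own logs.
CRAWLER_TOKENS = ("googlebot", "bingbot", "mj12bot", "baiduspider", "yandexbot")


def describe_user_agent(user_agent):
    """Report what a user-agent string CLAIMS to be (claims can be faked)."""
    lowered = user_agent.lower()
    # First crawler token found in the string, or None for an ordinary browser.
    crawler = next((token for token in CRAWLER_TOKENS if token in lowered), None)
    firefox = re.search(r"Firefox/(\d+)", user_agent)
    return {
        "claims_crawler": crawler,
        "on_macintosh": "Macintosh" in user_agent,
        "firefox_major": int(firefox.group(1)) if firefox else None,
    }


browser = describe_user_agent(
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10.9; rv:30.0) Gecko/20100101 Firefox/30.0"
)
bot = describe_user_agent(
    "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
)
```

The first call describes the Firefox example above; the second describes a string that claims to be Googlebot.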

Impersonating Google – a Bot’s life

In their review, Incapsula found that “over 4% of bots operating with Googlebot’s HTTP(S) user-agent are not who they claim to be.” And the big winner was Brazil, with a fake Googlebot rate of almost 14 percent.

Bad Bots

Why would anyone care about sending out a fake Googlebot?

Well, it’s sort of like having a fake ID when you’re 18. Sometimes you just want to hang out, but most of the time it means something your mother wouldn’t like is going on.

Not All Bots Are Bad

Keep in mind that not all fake bots have nefarious intent. Some simply use the Googlebot user agent to view your site as Google would, then put that data to work in things like SEO tools.

So before you block a bot, check out its behavior. Is it simply crawling? Is it sending requests over and over (a possible denial-of-service, or DoS, attack)? Are its requests randomized (which could be probing for holes to exploit)?

Once you determine that it is a bad bot, you will want to block its access. Be careful, however: you could wind up blocking Google, so make sure what you block is specific to that bot.

How Do You Know When You’re Getting Faked Visits?

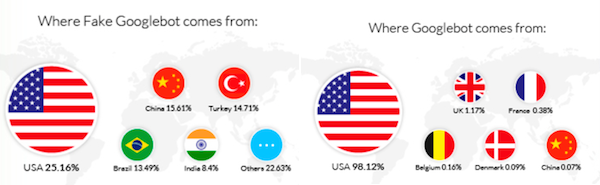

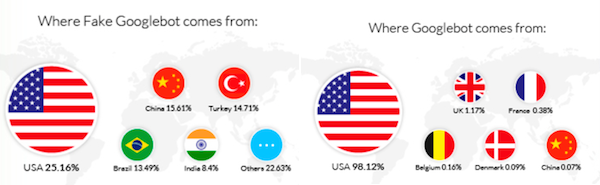

One of the key indicators for most site owners that something is afoot is the list of countries of origin in your Analytics organic traffic.

A normal distribution of the top six countries likely to visit your U.S. site is on the right; the one with fake Googlebot traffic is on the left. Now, if you target the countries on the left side, it’s perfectly normal to have them in your distribution. If not, you’re most likely having an issue.

If you see this, check your server logs and the user agents to see whether you have an attack underway. If so, and you don’t have access to the servers, talk to the company that does so they can block it for you.
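If you can read your access logs, a first pass might be to count how many requests each IP address makes while claiming to be Googlebot. The sketch below assumes the common "combined" log format used by Apache and Nginx; the `LOG_LINE` pattern, the `googlebot_claims_by_ip` function, and the sample lines (which use documentation-only IP addresses) are all illustrative, so adjust them to your own server's format.

```python
import re
from collections import Counter

# Assumes the "combined" access log format:
# IP ident user [time] "request" status bytes "referer" "user-agent"
LOG_LINE = re.compile(
    r'^(\S+) \S+ \S+ \[[^\]]+\] "[^"]*" \d{3} \S+ "[^"]*" "([^"]*)"'
)


def googlebot_claims_by_ip(lines):
    """Count requests per IP whose user agent claims to be Googlebot."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if match and "googlebot" in match.group(2).lower():
            counts[match.group(1)] += 1
    return counts


GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample_lines = [
    f'203.0.113.5 - - [01/Aug/2014:10:00:00 +0000] "GET / HTTP/1.1" 200 512 "-" "{GOOGLEBOT_UA}"',
    f'203.0.113.5 - - [01/Aug/2014:10:00:01 +0000] "GET /a HTTP/1.1" 200 512 "-" "{GOOGLEBOT_UA}"',
    '198.51.100.7 - - [01/Aug/2014:10:00:02 +0000] "GET / HTTP/1.1" 200 512 "-" "Mozilla/5.0 Firefox/30.0"',
]
claims = googlebot_claims_by_ip(sample_lines)
```

The resulting counts only show who claims to be Googlebot. Each of those IPs still needs to be verified before you block anything.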

Identifying Bad Bots Isn’t Too Hard

The good news is that most of the time it isn’t that difficult to identify the bad bot. Once that is done, you can block it and keep it off your site.

That is, if you have the power, rights, and access. Most site owners don’t and must rely on their hosting company, which is why it is so important to pick one that is security-ready and knowledgeable.

If you do have access, you can take these steps to verify the bot activity is actually occurring and then use this same information to block it.

How to Identify the Bad Bot?

Identifying bad bots can be tricky; some are very sophisticated, especially the ones mimicking Google. Here are some steps you can take to verify their presence.

Beating the Bot

From Incapsula and their study:

Step 1: Looking at Header Data

In this case, even though the bots were using Google’s own user-agent, the rest of the header data was very “un-Google like”. This was enough to raise the “red flag”, but not to issue a blocking decision because our algorithms account for scenarios where Google deviates from the usual header structure.

Step 2: IP and ASN verification

Next on our checklist was the IP and ASN verification process. Here we looked for a couple of things, including the identity of the owners of the IPs and the ASNs that are producing the now suspicious traffic.

In this case, neither the IPs nor the ASN belonged to Google. So, by cross-verifying this information with the already dubious headers, the system was able to determine – with a high degree of certainty – that it was dealing with potentially dangerous impostors.
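Site owners without such a system can approximate the IP check with the reverse-then-forward DNS lookup that Google documents for verifying Googlebot. The sketch below is not Incapsula's method. `is_verified_googlebot` and its injectable `reverse_lookup` and `forward_lookup` parameters are names invented here so the logic can be exercised without network access, and the default forward lookup handles IPv4 only.

```python
import socket

# Hostname suffixes Google documents for Googlebot's reverse DNS records.
GOOGLE_SUFFIXES = (".googlebot.com", ".google.com")


def is_verified_googlebot(ip, reverse_lookup=None, forward_lookup=None):
    """Return True only if the IP reverse-resolves to a Google hostname
    AND that hostname forward-resolves back to the same IP."""
    if reverse_lookup is None:
        reverse_lookup = lambda addr: socket.gethostbyaddr(addr)[0]
    if forward_lookup is None:
        # gethostbyname_ex returns (hostname, aliases, ipv4_addresses).
        forward_lookup = lambda host: socket.gethostbyname_ex(host)[2]
    try:
        host = reverse_lookup(ip).lower().rstrip(".")
        if not host.endswith(GOOGLE_SUFFIXES):
            return False
        # The forward lookup defeats attackers who control their own reverse DNS.
        return ip in forward_lookup(host)
    except OSError:  # covers socket.herror and socket.gaierror
        return False


# Offline demonstration with stand-in resolvers (hypothetical data).
genuine = is_verified_googlebot(
    "66.249.66.1",
    reverse_lookup=lambda addr: "crawl-66-249-66-1.googlebot.com",
    forward_lookup=lambda host: ["66.249.66.1"],
)
spoofed_rdns = is_verified_googlebot(
    "203.0.113.5",
    reverse_lookup=lambda addr: "crawl-1.googlebot.com",
    forward_lookup=lambda host: ["66.249.66.1"],
)
```

Calling the function with only an IP address performs real DNS lookups, so cache the results rather than checking on every request.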

Step 3: Behavior Monitoring

However, “potentially dangerous” doesn’t always equal “malicious”. For example, we know that some SEO tools will try to pass themselves off as Googlebot to gain a “Google-like” perspective of a site’s content and link profile.

This is why the next thing we look for is the visitors’ behavior, in an attempt to uncover their intent. Clues to such intent often come from the request itself, as it gets profiled by our WAF. In this case, the sheer rate of visits was enough to complete the picture, immediately pointing to a DDoS attack and raising the automated anti-DDoS defenses.
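A simple way to look at visit rate yourself is a sliding-window count per IP. This is only a sketch of the general idea, not how Incapsula's WAF works. The `find_high_rate_ips` function and its `window_seconds` and `max_requests` thresholds are arbitrary assumptions that you would tune to your own traffic.

```python
from collections import defaultdict, deque


def find_high_rate_ips(events, window_seconds=10.0, max_requests=50):
    """Flag IPs that exceed max_requests within any window_seconds span.

    events: iterable of (timestamp_in_seconds, ip), ordered by timestamp.
    """
    recent = defaultdict(deque)  # ip -> timestamps still inside the window
    flagged = set()
    for timestamp, ip in events:
        window = recent[ip]
        window.append(timestamp)
        # Drop timestamps that have fallen out of the sliding window.
        while timestamp - window[0] > window_seconds:
            window.popleft()
        if len(window) > max_requests:
            flagged.add(ip)
    return flagged


# Hypothetical traffic: one IP sends 60 requests in 6 seconds, another 5 in 5 seconds.
events = sorted(
    [(i * 0.1, "203.0.113.5") for i in range(60)]
    + [(float(i), "198.51.100.7") for i in range(5)]
)
noisy_ips = find_high_rate_ips(events)
```

A high rate alone does not prove malice, since genuine crawlers can also be busy, so treat the flag as one signal to combine with the identity checks.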

Step 4: IP Reputation and New Low-Level Signature

Although this wasn’t our first encounter with fake Googlebots, this specific signature variant wasn’t part of our existing database. Even as the attack was being mitigated, our system used the collected data to create a new Low-Level Signature which was then added to our 10M+ pool of signatures and propagated across our network to protect all Incapsula clients.

As a result, the next time these bots will come to visit, they will be immediately blocked. Moreover, the reputation of the attacking IPs was also recorded and added to a different database, where we keep data about all potentially dangerous IPs.

Simply put, you must be aware that user-agents can be fake, IPs can be spoofed, headers can be re-modeled and so on. And so, to provide reliable identification, you need to cross-verify various tell-tale signs to uncover the visitors’ true identity and intentions.
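To make the cross-verification idea concrete, here is a small decision sketch that combines the signals described in the steps above. The `VisitSignals` fields, the `classify_visit` function, and the returned labels are all hypothetical, and the ordering of the checks is one reasonable reading of the steps rather than Incapsula's actual algorithm.

```python
from dataclasses import dataclass


@dataclass
class VisitSignals:
    claims_googlebot: bool          # user agent says "Googlebot"
    headers_look_like_google: bool  # the rest of the headers match Google's usual shape
    ip_verified_as_google: bool     # IP/ASN (or DNS) check confirms Google
    high_request_rate: bool         # behavior suggests a flood


def classify_visit(signals):
    """Combine several weak signals instead of trusting any single one."""
    if not signals.claims_googlebot:
        return "not-googlebot"
    if signals.ip_verified_as_google:
        # Odd headers alone are not enough to distrust a verified Google IP.
        return "genuine"
    if signals.high_request_rate:
        return "block"
    if not signals.headers_look_like_google:
        return "likely-impostor"
    return "unverified"


attack = classify_visit(VisitSignals(True, False, False, True))
seo_tool = classify_visit(VisitSignals(True, False, False, False))
real = classify_visit(VisitSignals(True, True, True, False))
```

In this sketch only the combination of a failed identity check and aggressive behavior leads to a block, which mirrors the caution above about not locking out the good guys.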

Summary

Keep an eye on your organic traffic, especially countries of origin. When something is afoot, make sure to get in there and deal with your bot’s intentions. Just be careful not to keep the good guys at bay.

Google Analytics now also offers users bot and spider filtering.
