Technical SEO – It’s All About the Crawl

If search engines cannot crawl your website or index your pages, all of the content in the world will not make any difference.

For about as long as I’ve been in SEO (12+ years), I’ve witnessed many, many people laying claim to “doing SEO”. From designers, to content marketers, to PPC folks, to PR folks, to social media folks. Everyone wants to be in the game.

That’s all well and good…these folks do have their place.

But, with SEO, it all begins with “the crawl”.

If search engines cannot crawl your website or index your pages, all of the content in the world isn’t going to move the needle much. You can even generate huge numbers of great backlinks, and you’re still going to be stuck in the mud.

Some websites are easy enough to get crawled. I mean, if your website is generally “static”, and built in a simple manner (WordPress with a few plugins?), you’re unlikely to experience any issues. However, there are still many instances where websites have a challenge in getting optimal indexation.

Recently, my company brought on a new client who, by all appearances and our initial analysis, was impacted by Google Panda WAY BACK in February 2011. The evidence seemed pretty clear.

For years, my client was determined to move forward with “business as usual”, not giving SEO much thought or consideration. They were still making money by marketing themselves via PPC, email and other more traditional means.

Then they determined that it was about time to try to catch up with everyone else and devote some time, money and, yes, patience to the process of recovering their organic search presence.

This particular website is e-commerce and happens to be like many others who re-sell products; all of their product descriptions are shared amongst many others who also re-sell these identical products. “Easy enough”, we thought… we’ll rewrite a bunch of product descriptions, and then get on with the work of optimizing the results.

Not so fast.

As I’m sure many of you do, we kicked off the initiative with an “audit”. We wanted to take a holistic view of everything, in order to ensure a strategic approach to the effort. That was until we started to uncover a seemingly endless list of technical hiccups, missteps and redirections (literally) that led us to forgo the “typical process” and get much deeper into crawlability/indexability.

“Put first things first”, as they say.

Using the Wayback Machine, we were able to look back at the website from December 2010 to February 2011 (the analytics profile from this time period had been lost, and the developers who had worked on the website back then were no longer available for reference). This is what started our process of learning just what we had gotten ourselves into. Some “genius” decided that they should rewrite all of their URLs (insert a folder, for no apparent reason), stop using a search engine friendly URL structure (i.e., companyname.com/category/product/product-name) and then 302 redirect everything to these new URLs. That alone was/is bad. Then came a series of knee-jerk reactions to the losses that led to the implementation of just about every bad practice known to SEO.
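
For anyone who wants to spot this kind of redirect problem on their own site, here is a minimal Python sketch (not the tooling we used). It requests a URL without following redirects and reports the status code and target, so a temporary redirect (302) can be told apart from a permanent one (301). The function names are hypothetical, and the status grouping is a simplification.

```python
import http.client
from urllib.parse import urlsplit

# Status codes that signal a temporary move. 301 and 308 are the permanent ones.
TEMPORARY_REDIRECTS = (302, 303, 307)


def redirect_status(url, timeout=10):
    """Send a HEAD request without following redirects.

    Returns (status_code, location_header_or_None).
    """
    parts = urlsplit(url)
    if parts.scheme == "https":
        conn = http.client.HTTPSConnection(parts.netloc, timeout=timeout)
    else:
        conn = http.client.HTTPConnection(parts.netloc, timeout=timeout)
    try:
        path = parts.path or "/"
        if parts.query:
            path += "?" + parts.query
        conn.request("HEAD", path)
        response = conn.getresponse()
        return response.status, response.getheader("Location")
    finally:
        conn.close()


def is_temporary_redirect(status):
    """True when the status code is a temporary redirect."""
    return status in TEMPORARY_REDIRECTS
```

Running `redirect_status` over a list of old URLs and flagging every result where `is_temporary_redirect` is true gives a quick list of redirects worth reviewing.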

We quickly re-wrote the copy that was promised, and threw away the “game plan”, to get down and dirty on some technical SEO.

First things first, we needed to see if bots could adequately crawl the website.

Google Webmaster Tools: While Google Webmaster Tools was in place, the Sitemaps were outdated. Rather than simply re-submit Sitemaps, we generated new Sitemaps, categorized by the section of the website that we wanted to analyze (Main, Blog, XYZ Products, ABC Products, etc.). This is perhaps the best thing that we did. It helped us to isolate the areas of the website that were not getting fully indexed.
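
As an illustration of the per-section Sitemap idea, here is a rough Python sketch. It assumes that a page's section can be read from the first folder in its URL path, which will not hold for every website, and the function names are made up for this example.

```python
from collections import defaultdict
from urllib.parse import urlparse
from xml.sax.saxutils import escape


def group_urls_by_section(urls, default_section="main"):
    """Group URLs by their first path segment.

    Assumption: the first folder names the section. The home page and
    pages that sit directly off the root go into `default_section`.
    """
    sections = defaultdict(list)
    for url in urls:
        path = urlparse(url).path.strip("/")
        segments = path.split("/") if path else []
        section = segments[0] if len(segments) > 1 else default_section
        sections[section].append(url)
    return dict(sections)


def build_sitemap(urls):
    """Return a minimal Sitemap XML document for the given URLs."""
    entries = "\n".join(f"  <url><loc>{escape(u)}</loc></url>" for u in urls)
    return (
        '<?xml version="1.0" encoding="UTF-8"?>\n'
        '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">\n'
        f"{entries}\n"
        "</urlset>\n"
    )
```

Writing one file per section (for example `sitemap-blog.xml`) and submitting each one separately means the indexed counts are reported per section, which is what makes the weak areas visible.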

Log File Analysis: We had suspicions that scrapers were actively targeting our client’s website. A log-file analysis confirmed this. We were able to isolate some IPs and block them from crawling the website. We also wanted to look for any tell-tale signs that bots were having difficulty crawling the website.
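
A simple version of this kind of log-file check might look like the Python sketch below. It assumes the server writes the common/combined access log format, and it treats any user agent containing "bot" as a crawler, which is a crude heuristic (user agents can be faked). It is an illustration, not the analysis we ran.

```python
import re
from collections import Counter

# Common/combined log format:
# ip ident user [time] "request" status bytes "referer" "user-agent"
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[[^\]]+\] "(?P<request>[^"]*)" '
    r'(?P<status>\d{3}) \S+(?: "(?P<referer>[^"]*)" "(?P<agent>[^"]*)")?'
)


def summarize_log(lines):
    """Count hits per IP and errors served to self-declared bots.

    Returns (hits_per_ip, bot_errors), both Counter objects.
    bot_errors is keyed by (request_line, status_code).
    """
    hits_per_ip = Counter()
    bot_errors = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if not match:
            continue  # skip lines that are not in the expected format
        hits_per_ip[match["ip"]] += 1
        agent = (match["agent"] or "").lower()
        status = int(match["status"])
        if "bot" in agent and status >= 400:
            bot_errors[(match["request"], status)] += 1
    return hits_per_ip, bot_errors
```

`hits_per_ip.most_common(20)` surfaces the heaviest requesters, which is a starting point for spotting scrapers, and `bot_errors` lists the URLs where crawlers ran into 4xx/5xx responses.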

Content Analysis: Because we still believed that Panda could be in play, and we were able to identify many other websites with duplicate (stolen) content, we still needed to look for any instances where we may have created duplication within our own website. While not readily visible in many tools/crawls, by viewing cached versions of pages which we had determined were problematic, we were able to see that our client had “pop up” content indexed. This pop-up content was important to the user (how else would they know if a product was out-of-stock, etc.?), but it also existed on every product page, whether or not the product was out-of-stock. It was in Google’s cache. As a percentage, this “junk” content represented a large number. Perhaps half of the text content on their pages related to a product being out-of-stock, or otherwise “not available”. You think the search engines like to read that? You think they’ll want to index those pages? Our thoughts? “Probably not”.
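
To put a number on how much of a page is repeated text, something like the following Python sketch can help. It assumes the visible text of each page has already been extracted, and it counts a sentence as repeated only when the exact same sentence appears on every page supplied. The function names are hypothetical.

```python
import re


def split_sentences(text):
    """Very rough sentence splitter: break after ., ! or ?."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def boilerplate_share(pages):
    """For each page, return the fraction of its words that sit in
    sentences appearing on every page in `pages`."""
    if not pages:
        return []
    sentence_sets = [set(split_sentences(page)) for page in pages]
    shared = set.intersection(*sentence_sets)
    shares = []
    for page in pages:
        sentences = split_sentences(page)
        total_words = sum(len(s.split()) for s in sentences)
        repeated_words = sum(len(s.split()) for s in sentences if s in shared)
        shares.append(repeated_words / total_words if total_words else 0.0)
    return shares
```

A share near 0.5 across a set of product pages would match the "perhaps half" situation described above and points to template text that should be kept out of the indexable content.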

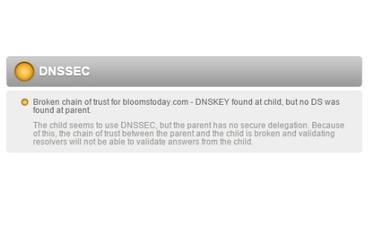

DNS Issues: As we dug deep into possible technical issues, we wanted to understand if there were any DNS issues for the domain. We wanted to ensure that there were no issues with the way the domain was set up, as well as no roadblocks when the site is “called up” and the server must begin communication. We did find a slight issue at the DNSSEC level, where delegation was lacking and communication could not be validated. This was corrected.

Security of the Website/Cross-Site Scripting (XSS): Conducting some site queries in the search engines, we stumbled into an instance where we clicked through to the client’s website and received a message that the website “cannot be trusted”. We decided that we needed to check the website for any malware. For this, we used Zed Attack Proxy. As it turned out, the client’s website was, in fact, returning a “positive” (which really means “negative”) result to our test. And, as it turns out, it wasn’t really XSS but rather a “false positive” result due to the way that their software performed various tasks in the backend. Still, though…if a tool is showing issues, why wouldn’t a search engine think that there are issues?

Mobile: There wasn’t a quick fix here, as the client is utilizing a mobile application and not (yet) running a responsive website. This is coming soon, but we are working with their mobile application to at least get some one-to-one mapping for all pages (currently, all pages in mobile will direct you to the home page, as they have a “wizard” application to push you through the sales process).

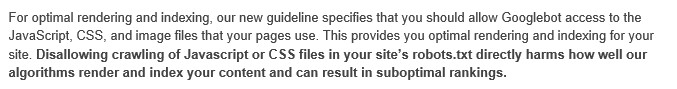

JavaScript / CSS: The client was blocking JS and CSS from the bots. As Google announced last year, this is not a good thing.
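
A quick way to check for this is to test script and stylesheet URLs against the robots.txt rules. The Python sketch below uses the standard library's `urllib.robotparser`. Note that this parser does simple prefix matching and does not understand the wildcard patterns Google supports, so treat the result as a first pass. The function name and the example paths are made up.

```python
from urllib.robotparser import RobotFileParser


def blocked_resources(robots_txt, resource_urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt text disallows
    for `user_agent`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in resource_urls if not parser.can_fetch(user_agent, url)]


if __name__ == "__main__":
    robots = "User-agent: *\nDisallow: /js/\nDisallow: /css/\n"
    urls = [
        "https://example.com/js/app.js",
        "https://example.com/css/site.css",
        "https://example.com/product-name",
    ]
    for url in blocked_resources(robots, urls):
        print("blocked:", url)
```

Any `.js` or `.css` file that shows up in the output is one the bots cannot fetch when they try to render the page.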

URL Structures: This client had been using a somewhat common method for URL generation, and one which has historically worked just fine (arguably). That is, place all product pages directly off of the root of the domain (companyname.com/product-name). I’ve always held the belief that this does, in fact, work, but it shouldn’t. Search engines should be smarter than this. With recent changes in the mobile algorithm, I am now a strong supporter of URL structures which follow the structure of the website (and breadcrumbs which accompany this). We have now rewritten all URLs to a proper format, and added Category pages where there had been none previously.
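
When URLs move from the root into a category structure, every old URL needs a redirect to its new home. The Python sketch below builds such a map. It assumes a new pattern of `/category/product-name` and permanent (301) redirects, both of which are illustrative choices rather than the client's actual setup, and the product and category names are invented.

```python
def build_redirect_map(product_categories):
    """Map old root-level product paths to category-based paths.

    `product_categories` maps a product slug to its category slug.
    Returns {old_path: (status_code, new_path)}.
    """
    redirects = {}
    for product, category in product_categories.items():
        old_path = f"/{product}"
        new_path = f"/{category}/{product}"
        redirects[old_path] = (301, new_path)  # 301 = moved permanently
    return redirects


if __name__ == "__main__":
    mapping = build_redirect_map({"blue-widget": "widgets"})
    for old, (status, new) in mapping.items():
        print(old, "->", status, new)
```

The resulting map can then be translated into whatever redirect rules the web server or e-commerce platform expects.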

We have made so many changes (and things are still not where they ultimately need to be) that we can now take a step back and begin to consider the more strategic approach that had been planned for, long ago. We can now consider things like content-gap analysis, information architecture enhancements, a redesign of the website, social media strategy, PR and usability/conversion rate optimization. All of those things are certainly important, but it all begins with the crawl.
