CTR as a ranking factor: what we know so far and how to action it

For the last two years there has been much debate over whether click-through rate (CTR) is something Google uses to determine where to rank a site.

It all began when Rand Fishkin ran an interesting experiment that provided some evidence for his theory that CTR could in fact influence rankings.

This theory makes sense; why wouldn’t Google be looking at user based signals to improve search results?

Just as we do user testing on our websites to increase the number of conversions, Google will surely want to be looking at user signals to ensure they are fulfilling their goal of ‘organising the world’s information’ in the correct order in SERPs.

However, whenever Google, and more specifically Gary Illyes, has been questioned on this, the response has been the opposite: Google does not use CTR as a ranking factor because it is a ‘noisy signal’ and something that could be gamed by spammers.

See this response from Gary Illyes in an interesting interview with Kwasi Studios:

We also received the same response from Gary when he mentioned the topic in June 2015 at SMX Advanced.

At the event Gary did, however, say CTR is being used for personalisation, meaning search results change if you frequently click on the same site. This can be especially useful for ambiguous terms.

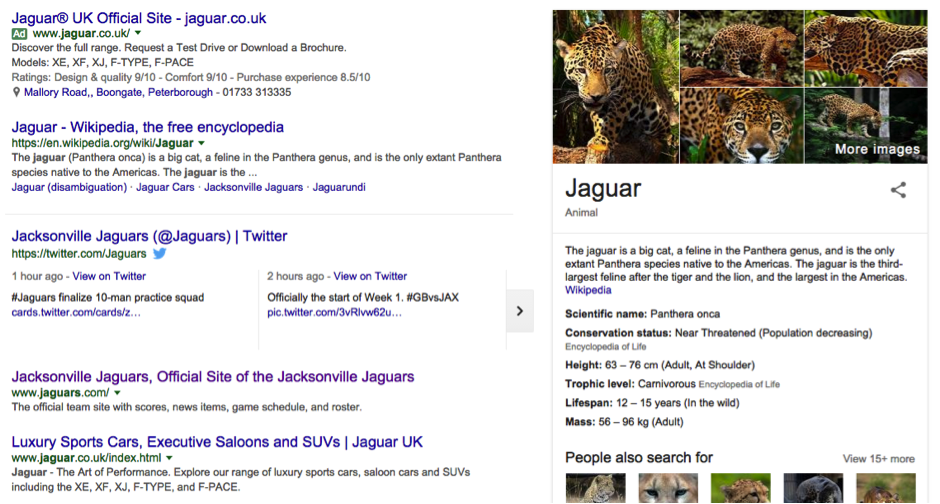

For example, if a user searches for ‘Jaguars’, a ‘Query Deserves Diversity’ (QDD) algorithm will take effect and display results for the car brand, the Jacksonville Jaguars and the animal.

If the user then clicks on the car brand and does that frequently, Google will be more likely to show the Jaguar car brand due to the high CTR for the Jaguar site. Other than that, Gary says click data is not being used.

Because of these denials from Google, some have concluded that Google does not make use of CTR at all.

However, we have had other statements from Google specifying that they do in fact use CTR in some circumstances.

The primary evidence we have from Google that CTR is being used for more than just personalisation is in this excellent presentation by Paul Haahr titled ‘How Google Works: A Ranking Engineer’s Perspective’.

If you have not watched the presentation yet, I highly recommend it! You can find a video of it here.

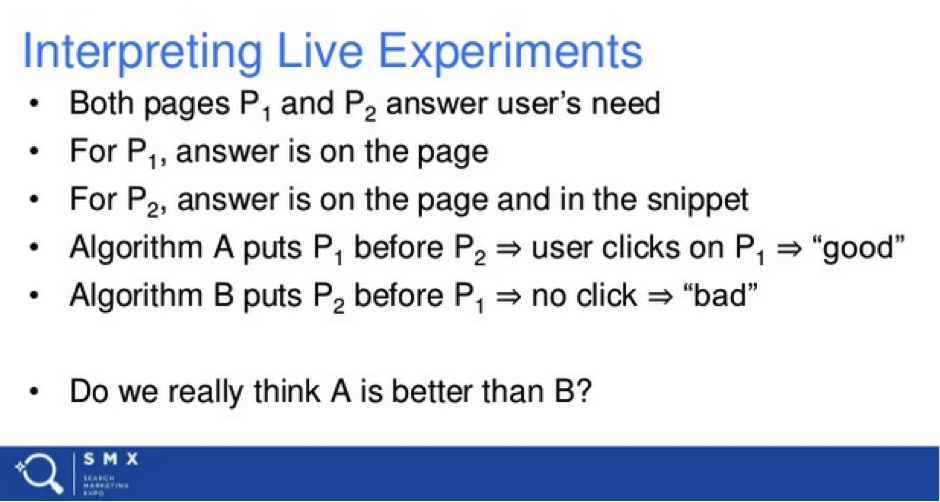

From this video, we can gather Google is using click through rate, but not to adjust rankings directly. They are instead using it indirectly in controlled situations to validate the quality of search results.

They are also using it to verify that an algorithm change has the desired results. ‘Controlled situations’ means they are taking a portion of search results, testing changes and using CTR as a metric to measure if the changes had the desired impact on improving user engagement.

Going by what Google has said, CTR is not being used to change search results directly. It is instead being used to test whether changes to direct ranking signals, such as content and links, are improving user engagement and the quality of search results.

This is explained in this slide from Paul Haahr’s presentation:

As explained in the presentation, CTR is only one of the ways Google tests that search results are showing up in the correct order.

They are also taking a more manual approach with human rater experiments. This is where Google shows an actual person a search result that has an experimental algorithm change applied, and asks them to score the page on two things: whether the user’s needs have been met (does the page fulfil user intent), and the quality of the page.

This means that gaming the system by artificially increasing CTR becomes virtually impossible because, alongside the live experiments, pages are manually reviewed before algorithm changes take place.

I am sure CTR and human rater experiments are only some of the ways Google will be testing algorithm changes.

Although they were never mentioned as user engagement metrics in the presentation, there is a high chance Google is also paying attention to pogo sticking and dwell time. If this is true, it makes metrics such as average time on site and bounce rate important things to consider.

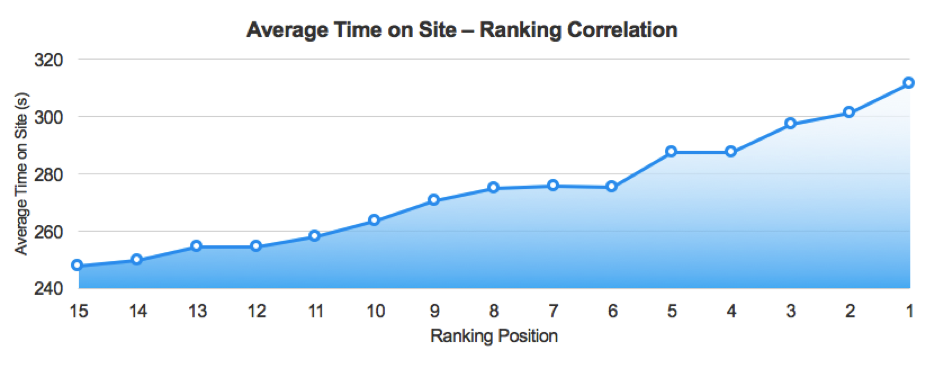

Here is a bit of data sourced from our in-house ‘Roadmap’ technology that looks at over 160 potential ranking factors and shows correlations in search performance.

And yes, I know correlation does not mean causation, but it can give us a good indication of what Google is taking into consideration when ranking sites. Here is how average time on site correlates:

Source: Stickyeyes Roadmap
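If you want to run a similar check on your own data, a rank correlation is one simple way to do it. The snippet below is a minimal Python sketch of a Spearman correlation between ranking position and average time on site. It is not how Roadmap works, the function names are my own, and the sample numbers are made up purely to exercise the code.

```python
import math


def average_ranks(values):
    """Return 1-based ranks, giving tied values the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with the one at order[i].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        rank = (i + j) / 2 + 1  # average of the 1-based ranks i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = rank
        i = j + 1
    return ranks


def pearson(xs, ys):
    """Plain Pearson correlation of two equal-length lists."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def spearman(xs, ys):
    """Spearman correlation: Pearson correlation computed on the ranks."""
    return pearson(average_ranks(xs), average_ranks(ys))


# Made-up illustrative data: ranking position and average time on site (seconds).
positions = [1, 2, 3, 4, 5]
time_on_site = [210, 180, 190, 120, 95]
rho = spearman(positions, time_on_site)
print(f"Spearman correlation: {rho:.2f}")
```

A negative value here would mean that longer visits go together with better (lower-numbered) positions. As above, that is still only a correlation and says nothing about cause.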

Of course, this does not mean Google is directly creating algorithms that change rankings based on things such as average time on site.

It does, however, inform us that Google is favouring sites that searchers stay on.

This metric could be used in a similar way to how Paul Haahr explains Google uses CTR, where they will adjust traditional signals such as content and links depending on whether the user stays on the site or not. Again, this is all speculation with a bit of data to back it up.

What Google has confirmed we can treat as correct, but we still have Rand Fishkin’s CTR experiments, which suggest Google is not telling us everything it uses click data for.

This is where we have to speculate based on the information the SEO community has managed to get out of Google.

Thankfully some insight into this was given by Googler Andrey Lipattsev in a Q&A show back in March 2016 when Rand questioned him on it. Here is Andrey’s response to Rand asking why exactly that happened:

“Andrey: It’s hard to judge immediately, without actually looking at the data in front of me. But, in my opinion, my best guess here would be the general interest that you generate around that subject, and you generate exactly the sort of signals that we are looking out for, mentions and links and social tweets and social mentions, which are basically more links to the page, more mentions of this context. I suppose it throws us off for a while until we’re able to establish that none of that is relevant to the user intent, I suppose.”

From Andrey’s response, I believe he is describing a part of Google’s algorithm that acts as a temporary ranking factor: it identifies hot topics or searches and then adjusts rankings according to social signals, and possibly user behaviour.

We already know Google tries to push rankings up for fresh content, and this algorithm seems similar to the freshness algorithm (announced way back in 2011).

When this ranking change occurs, it is more than likely that Google applies the technology it uses to combat click fraud in AdWords. I believe this experiment, which used bot traffic and concluded that CTR was not a ranking factor, backs this up.

From all of the above we can summarise what we know into the following things:

It is evident that user engagement and sending users to the correct website are important to Google. Because of this, we also know optimising CTR is something we should be doing.

The rest of the post covers how to identify click-through rate opportunities, and then how to improve CTR.

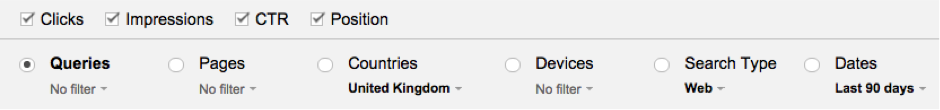

The only reliable data we have on CTR comes from Google itself in the Search Console search analytics report.

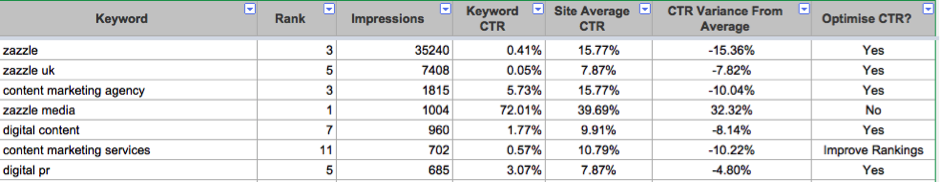

To help with identifying these terms I have made this CTR Opportunity Analysis template Google sheet. This sheet finds out what the average CTR is for keywords ranking position 1 – 100 from a GSC export, and then compares each keyword against your site’s average CTR for that position.

This allows you to identify if there is a significant opportunity to improve click-through for a particular query. You can see whether there is an opportunity as it will tell you in the ‘Optimise CTR’ column.

If a query ranks outside the top ten (position 11 or lower), it will simply say ‘Improve Rankings’. If you have your own data on average CTR for each position, there is a hidden sheet called ‘CTR Ref’ where you can fill this information in. You could also replace it with data from this Moz CTR study.
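For anyone who prefers a script to a spreadsheet, the logic described above can be roughly sketched in Python. This is my own approximation rather than the sheet’s actual formulas. It assumes the GSC export has been loaded into a list of dicts with the hypothetical keys `query`, `clicks`, `impressions` and `position`, that the per-position average is weighted by impressions, and that queries at or above the average get an ‘OK’ label (a name I have made up).

```python
from collections import defaultdict


def ctr_opportunities(rows, max_position=10):
    """Label each query 'Optimise CTR', 'Improve Rankings' or 'OK'.

    rows: list of dicts with 'query', 'clicks', 'impressions', 'position'
    (hypothetical field names; rename them to match your own export).
    """
    # Site-wide average CTR per whole-number position, weighted by impressions.
    totals = defaultdict(lambda: [0, 0])  # position -> [clicks, impressions]
    for row in rows:
        pos = round(row["position"])
        totals[pos][0] += row["clicks"]
        totals[pos][1] += row["impressions"]
    avg_ctr = {p: c / i for p, (c, i) in totals.items() if i > 0}

    labelled = []
    for row in rows:
        pos = round(row["position"])
        ctr = row["clicks"] / row["impressions"] if row["impressions"] else 0.0
        if pos > max_position:
            action = "Improve Rankings"
        elif ctr < avg_ctr.get(pos, 0.0):
            action = "Optimise CTR"
        else:
            action = "OK"  # assumed label; the sheet's wording may differ
        labelled.append(
            {"query": row["query"], "position": pos, "ctr": ctr, "action": action}
        )
    return labelled


# Made-up rows purely to show the shape of the input.
sample = [
    {"query": "blue widgets", "clicks": 10, "impressions": 100, "position": 1.3},
    {"query": "red widgets", "clicks": 30, "impressions": 100, "position": 1.0},
    {"query": "green widgets", "clicks": 1, "impressions": 100, "position": 14.3},
]
for item in ctr_opportunities(sample):
    print(item["query"], item["action"])
```

To compare against your own benchmarks or a published CTR study instead of the site average, you could replace `avg_ctr` with a dict of reference CTRs keyed by position.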

To find queries that have a low click-through rate, do the following:

By now, we have the keywords and pages that we need to optimise for. We just need to go through the process of actually improving our pages. Here are some different things you can do to improve CTR:

Once you have done any or all of the above, check how organic traffic changes. Then go back and retest something different. You can rinse and repeat this process until you are seeing diminishing returns from improving CTR for a page.
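When checking whether a change actually moved CTR, it helps to rule out ordinary noise before moving on to the next test. One way to do that, which is my own suggestion rather than anything Google or the template prescribes, is a two-proportion z-test on clicks and impressions before and after the change. The function name and the numbers below are hypothetical, and the test treats impressions as independent trials, which is only an approximation.

```python
import math


def ctr_change(clicks_before, impressions_before, clicks_after, impressions_after):
    """Compare CTR before and after a change using a two-proportion z-test."""
    if impressions_before <= 0 or impressions_after <= 0:
        raise ValueError("impressions must be positive in both periods")

    ctr_before = clicks_before / impressions_before
    ctr_after = clicks_after / impressions_after

    # Pooled CTR under the assumption that nothing really changed.
    pooled = (clicks_before + clicks_after) / (impressions_before + impressions_after)
    std_err = math.sqrt(
        pooled * (1 - pooled) * (1 / impressions_before + 1 / impressions_after)
    )
    z = (ctr_after - ctr_before) / std_err if std_err > 0 else 0.0

    relative = (ctr_after - ctr_before) / ctr_before if ctr_before > 0 else None
    return {
        "ctr_before": ctr_before,
        "ctr_after": ctr_after,
        "relative_change": relative,
        "z": z,
    }


# Made-up example: 50 clicks from 1,000 impressions before, 80 from 1,000 after.
outcome = ctr_change(50, 1000, 80, 1000)
print(outcome)
```

As a rough rule of thumb, an absolute z value above about 1.96 suggests the difference is unlikely to be chance at the usual 5% level. Make sure the average position was similar in both periods, otherwise a ranking change could explain the difference rather than your snippet changes.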

Overall we can say that CTR is something that is being used by Google one way or another. Rand seems to have uncovered ways in which it is used directly and temporarily to modify search results.

Paul Haahr from Google has informed us it is used indirectly to measure the quality of search results. Because of the above, and because it can simply increase organic traffic, CTR is something you should be taking into consideration when optimising your site.

Getting a site technically fit for purpose, writing great content and building quality links all remain important.

But along with focusing more on click-through rate, SEOs should also spend more time thinking about user engagement and UX, as both are becoming more and more important to consider when trying to perform well in search results.

Let me know how the CTR Opportunity Analysis template works for you, and any thoughts you have on CTR or user engagement as a ranking factor in the comments!

Sam Underwood is a Search and Data Executive at Zazzle Media and a contributor to SEW.
