A guide to testing your paid social ads to enhance ROI

While display and paid search ads have their place in every digital marketing strategy, social media advertising is often an overlooked paid channel.

Paid search is great at capturing user intent, but it is a poor channel for proactively targeting customers and feeding the top of the funnel before the user has any purchasing intent. With click-through rates on Facebook ads more than eight times better than on paid search, there is definitely an opportunity to move some of your advertising spend onto social, but it is important to use ad testing to maximise your ROI.

This post is split into three sections: the theory behind advert testing; the specific elements that can be tested, along with which ones deliver the greatest benefit; and a useful list of actionable insights we've developed from personal experience.

We'll focus mainly on Facebook, as it is by far the biggest platform and it provides the most elements that can be tested.

Split testing is the main technique used in paid social advertising to measure the ROI of your campaigns.

Split testing compares two versions of an ad or campaign with only a single element changed between them. This lets you measure how each version of that element performs and keep the one that is working.

To properly determine a winning element, the result needs to achieve statistical significance, i.e. the difference between the variants is unlikely to be explained by random chance alone, so it can be expected to repeat.

This is an important concept to understand. If we flip a coin twice and it lands on heads both times, we cannot assume that the probability of heads is 100%, as the sample size is too small; we know that the odds of this happening with a fair coin are 1 in 4. At what point can we be confident in the result? In other words, what is the probability of getting no tails if the coin is fair?

In this example it is 25%, whereas significance is normally set at 5% or less, so this is not a significant result. We would need 5 consecutive heads to reach significance, because only about 3% (1 in 32) of experiments on a fair coin would produce that result.
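If you want to check the coin arithmetic yourself, here is a minimal Python sketch. It assumes a fair coin and the conventional 5% threshold, and it only asks how likely an unbroken run of heads is (a one-sided question), which is all the example needs.

```python
def prob_all_heads(flips: int) -> float:
    """Probability that a fair coin lands heads on every one of `flips` flips."""
    return 0.5 ** flips


def min_heads_for_significance(alpha: float = 0.05) -> int:
    """Smallest run of consecutive heads whose probability under a fair coin is <= alpha."""
    flips = 1
    while prob_all_heads(flips) > alpha:
        flips += 1
    return flips


for flips in range(1, 6):
    print(flips, prob_all_heads(flips))
# 1 0.5
# 2 0.25
# 3 0.125
# 4 0.0625
# 5 0.03125

print(min_heads_for_significance())  # 5
```

Four heads in a row (6.25%) still sits just above the 5% line, which is why the example needs a fifth.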

Statistical significance is complicated to work out when sample sizes differ between test groups, so I would just use this tool to make it easier.
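For anyone curious about what a calculator like this does under the hood, the sketch below uses the standard pooled two-proportion z-test. We don't know which method the tool itself uses, so treat this as one common approach rather than its implementation. It compares click-through rates and copes with unequal impression counts. The click and impression figures in the usage line are hypothetical, and because the test relies on a normal approximation, it is only reasonable once each advert has collected a decent number of clicks.

```python
from math import erf, sqrt


def two_proportion_p_value(clicks_a: int, impressions_a: int,
                           clicks_b: int, impressions_b: int) -> float:
    """Two-sided p-value for the difference between two click-through rates (pooled z-test)."""
    ctr_a = clicks_a / impressions_a
    ctr_b = clicks_b / impressions_b
    # Under the null hypothesis both adverts share one CTR, estimated from the pooled data.
    pooled = (clicks_a + clicks_b) / (impressions_a + impressions_b)
    se = sqrt(pooled * (1 - pooled) * (1 / impressions_a + 1 / impressions_b))
    z = (ctr_a - ctr_b) / se
    # Standard normal CDF via the error function: Phi(x) = 0.5 * (1 + erf(x / sqrt(2)))
    phi = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return 2 * (1 - phi)


# Hypothetical results: advert A vs advert B, with different impression counts.
p_value = two_proportion_p_value(clicks_a=120, impressions_a=10_000,
                                 clicks_b=80, impressions_b=9_000)
print(f"p = {p_value:.3f}")
```

With these made-up numbers, the p-value comes out below 0.05, so advert A's higher CTR would count as significant at the usual threshold.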

Now that we have the theory, let's expand a bit on the actual elements we should be testing on social media platforms.

There are a large number of elements that can and should be tested: imagery, titles, copy, ad type, bidding, target audience, etc. If we want to test three images, three titles, three pieces of copy and three ad types, that's already 81 separate adverts to create (3 to the power of 4).
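The combinatorial explosion is easy to see in code. In this sketch the option names are placeholders, not real creative or Facebook ad formats. For comparison, it also counts the adverts needed if you instead change one element at a time from a single baseline advert, which is closer to the "start small" approach recommended below.

```python
from itertools import product

# Placeholder options: three of each element.
images = ["image_1", "image_2", "image_3"]
titles = ["title_1", "title_2", "title_3"]
copy_variants = ["copy_1", "copy_2", "copy_3"]
ad_types = ["type_1", "type_2", "type_3"]
elements = [images, titles, copy_variants, ad_types]

# Testing every combination: one advert per (image, title, copy, ad type) tuple.
variants = list(product(*elements))
print(len(variants))  # 81

# Changing one element at a time: the baseline advert plus each alternative option.
one_at_a_time = 1 + sum(len(options) - 1 for options in elements)
print(one_at_a_time)  # 9
```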

You will also need to put enough budget into each advert to reach statistical significance, so we would recommend starting small, with only a few tests at once.
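How much budget is "enough" depends mostly on how big a difference you are trying to detect. The sketch below uses the standard sample-size approximation for comparing two proportions to estimate how many impressions each advert needs. The baseline and target CTRs, the 5% significance level and the 80% power are all assumptions you would swap for your own figures.

```python
from math import ceil
from statistics import NormalDist


def impressions_per_variant(baseline_ctr: float, expected_ctr: float,
                            alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate impressions each advert needs to detect a CTR change (two-sided test)."""
    z = NormalDist()  # standard normal distribution
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_beta = z.inv_cdf(power)
    variance = baseline_ctr * (1 - baseline_ctr) + expected_ctr * (1 - expected_ctr)
    n = (z_alpha + z_beta) ** 2 * variance / (baseline_ctr - expected_ctr) ** 2
    return ceil(n)


# Hypothetical: current CTR of 1%, hoping a new image lifts it to 1.5%.
print(impressions_per_variant(0.010, 0.015))
```

Smaller expected differences push the required impressions up sharply, which is another reason to test big differences first.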

The list below is in ascending order of impact on ROI:

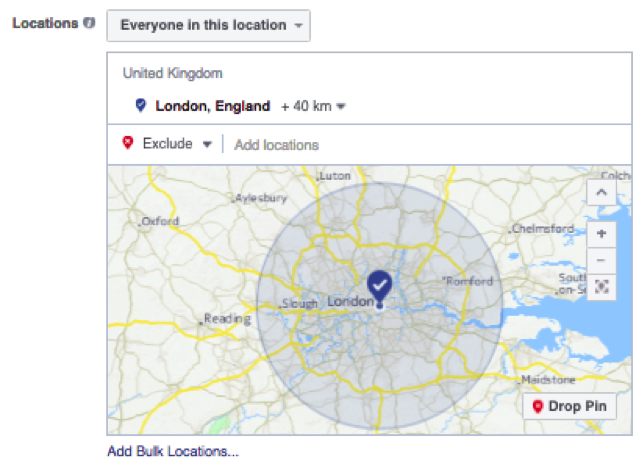

Use common sense to exclude unnecessary elements. If your service area is limited to a certain town, or your product is only suitable for women, you can exclude locations and genders immediately.

It is also worth noting that Facebook provides reports on location, gender and ad placement, so it is probably worth skipping these elements in your first test; you can then use those reports to rule some of them out in later tests.

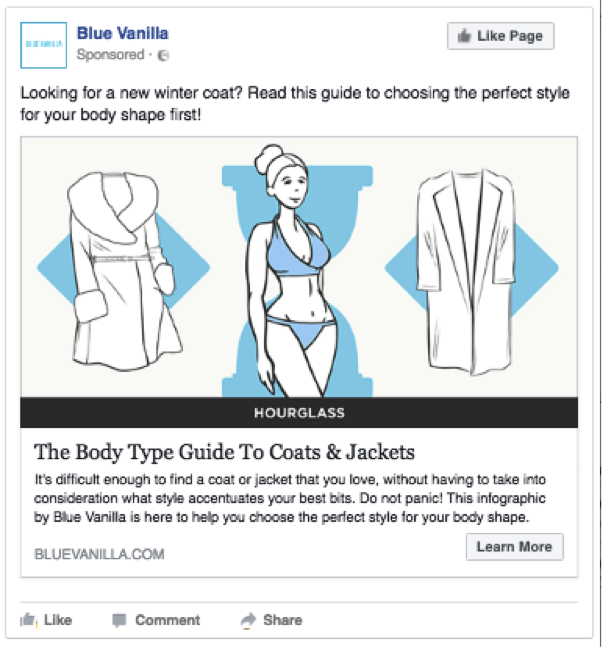

In your initial tests you shouldn't focus on subtle differences such as capitalisation or the background colour of an image. Test big differences: a photograph against a cartoon image, or a personal headline against a click bait one.

Don't go all in by splitting your total budget 50/50 on your first test. For every winning advert there has to be a loser, and you will lose money every time you commit to a test. This is necessary, but the alternative is worse: would you rather waste 50% of your budget or 100%?

Be conservative with your budget and set aside a smaller portion to begin testing with. Once you have a winning advert, allocate your resources to it, but don't forget to save some of the budget for further testing.

Creating customer personas is incredibly important and should be done before you launch a single advert. Create a number of different customer personas based on your expectations.

You can then feed this information into the design and targeting of your adverts, and your test results will feed back into your initial assumptions to create more realistic personas.

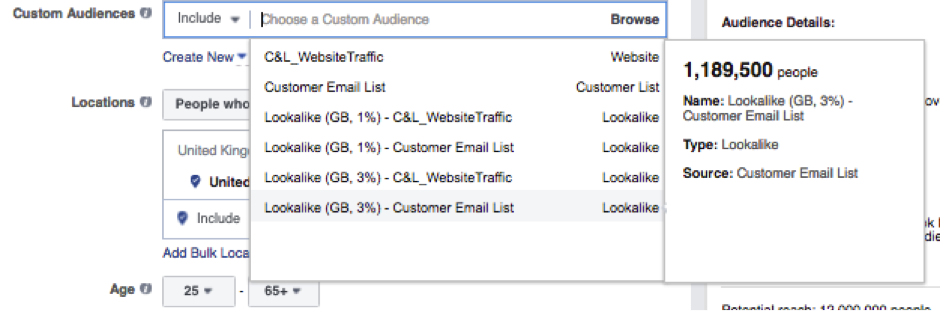

When testing different audiences, such as a custom audience against an interest group, individual users may land in both groups. This doesn't make for a fair test, as any affected users will be exposed to both sets of adverts and so will not make an independent decision.

Using negative audiences (exclusions) eliminates this issue, so make sure you exclude all other test audiences from each audience in your test to keep it legitimate.
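On Facebook itself you set this up with audience exclusions in the ad set, not in code. The sketch below only illustrates the underlying set logic: any user who appears in more than one test audience is dropped from all of them, so every remaining user sees just one set of adverts. The audience names and user IDs are hypothetical.

```python
def make_exclusive(audiences: dict[str, set[str]]) -> dict[str, set[str]]:
    """Remove from each audience every user who also appears in another test audience."""
    exclusive = {}
    for name, users in audiences.items():
        # Union of all the *other* audiences acts as this audience's negative audience.
        others = set().union(*(u for other, u in audiences.items() if other != name))
        exclusive[name] = users - others
    return exclusive


# Hypothetical audiences with two overlapping users (u3 and u4).
audiences = {
    "custom_audience": {"u1", "u2", "u3", "u4"},
    "interest_group": {"u3", "u4", "u5", "u6"},
}
exclusive_audiences = make_exclusive(audiences)
for name, users in exclusive_audiences.items():
    print(name, sorted(users))
# custom_audience ['u1', 'u2']
# interest_group ['u5', 'u6']
```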

This can't be emphasised enough. All split testing will ever tell you is which element performs better than its alternatives; you will never get the best possible advert from a single test of a single element. Keep testing.

Nothing kills an experiment like forgetting to mark up your variations with separate tracking codes. You’ve just lost all insight and will have to repeat your test. Mark up each individual advert with a separate tracking code to properly record each variation in your analytics platform.
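If your analytics platform reads Google Analytics-style UTM parameters, one way to tag each variation is to give every advert its own `utm_content` value, which is the conventional slot for telling ads apart. This is a minimal sketch. It assumes the landing page URL has no existing query string, and the URL, campaign name and variant ID are all hypothetical.

```python
from urllib.parse import urlencode


def tracked_url(landing_page: str, campaign: str, variant_id: str,
                source: str = "facebook", medium: str = "paid_social") -> str:
    """Append UTM parameters so each advert variant is recorded separately in analytics."""
    params = {
        "utm_source": source,
        "utm_medium": medium,
        "utm_campaign": campaign,
        "utm_content": variant_id,  # unique per advert variant
    }
    return f"{landing_page}?{urlencode(params)}"


print(tracked_url("https://www.example.com/offer", "spring_test", "photo_personal_headline"))
# https://www.example.com/offer?utm_source=facebook&utm_medium=paid_social&utm_campaign=spring_test&utm_content=photo_personal_headline
```

Generating the links from one function, instead of typing them by hand, makes it much harder to accidentally reuse a code across two variations.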

You don't have to jump straight into paid advertising. Use your current audience to beta test imagery and copy. You can't run an accurate split test this way, as there will be a number of additional variables that cannot be controlled, e.g. the time of day, the day of the week or the week itself when you post your variations. But it is a free way of getting some insight.

Once your test reaches statistical significance, turn it off. If there is a clear winner, you are just wasting budget on the losing advert. The purpose of statistical significance is to give you confidence in your results, so use the insight rather than continuing to spend money that could be used elsewhere.

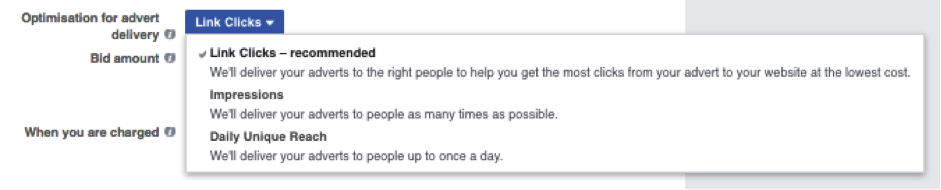

While constantly tweaking your headline or interest groups to eke out a few extra clicks for your money, it is important to keep your main objective in mind at all times. If your goal is conversions, then focusing on your CTR alone is probably not ideal.

Click bait may lead to a surge in traffic, but also to an increased bounce rate. Don't mislead the user; make the outcome you want from them as clear as possible before they click.
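To see how the "winner" depends on the objective, here is a small sketch with invented numbers (illustrative only, not real campaign results). The click bait headline wins on CTR, but the plainer headline wins on cost per conversion, which is the metric that matters if conversions are your goal.

```python
from dataclasses import dataclass


@dataclass
class AdResult:
    name: str
    impressions: int
    clicks: int
    conversions: int
    spend: float

    @property
    def ctr(self) -> float:
        return self.clicks / self.impressions

    @property
    def cost_per_conversion(self) -> float:
        # An advert with no conversions has an effectively infinite cost per conversion.
        return self.spend / self.conversions if self.conversions else float("inf")


# Invented figures: same spend and impressions, different headlines.
ads = [
    AdResult("clickbait_headline", impressions=10_000, clicks=300, conversions=6, spend=150.0),
    AdResult("plain_headline", impressions=10_000, clicks=150, conversions=9, spend=150.0),
]

best_ctr = max(ads, key=lambda ad: ad.ctr)
best_cpa = min(ads, key=lambda ad: ad.cost_per_conversion)
print(best_ctr.name)  # clickbait_headline
print(best_cpa.name)  # plain_headline
```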

There has been a lot of information in this post, so if you only take one thing away, it should be the testing methodology.

Make an assumption about how you think an element will affect the outcome, then test it and refine your assumption accordingly. Always treat your adverts as attempts to prove a premise. Never rest on your assumptions; keep testing and always refine.

Tom Smith is a Search and Data Consultant at Zazzle Media and a contributor to SEW.
