Imagine playing a team sport in which your team could be penalized at any time, but you and your teammates had only a rudimentary idea of what might trigger a penalty, or even whether both teams were being held to the same standards. Sound intriguing?

If that appeals to you, you may be an SEO professional. Or an anarchist. Maybe both.

Moz recently published the full results of their 2013 Search Engine Ranking Factors survey. SEW previously reported on the initial findings, but I decided to take a look to see if I’d find anything of substance. I thought if nothing else, the input of more than 120 SEO professionals and a scientific approach should be a good starting point.

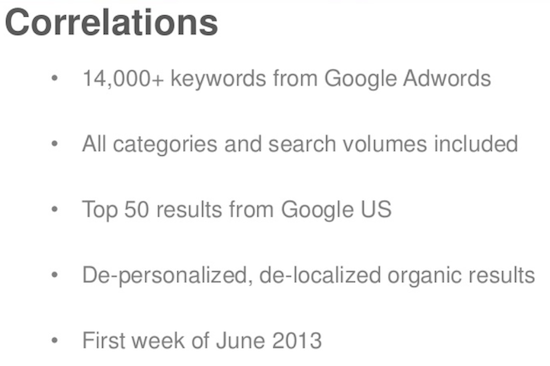

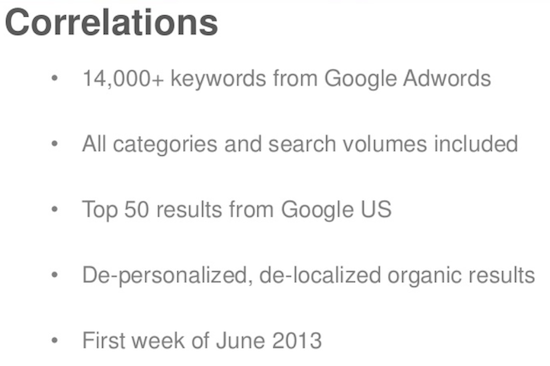

The report was apparently compiled in two phases: a survey of 129 SEOs, soliciting their impressions and opinions on the importance of various factors and signals, and a scientific correlation analysis of over 14,000 keywords.

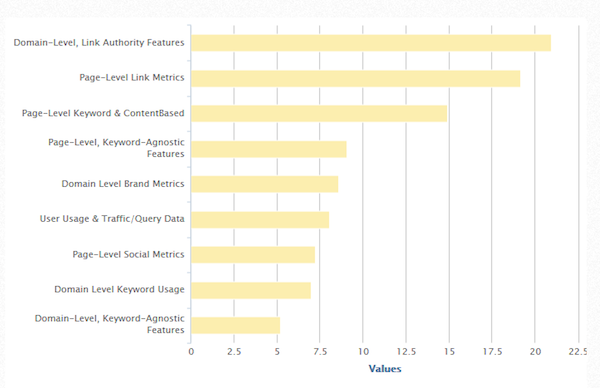

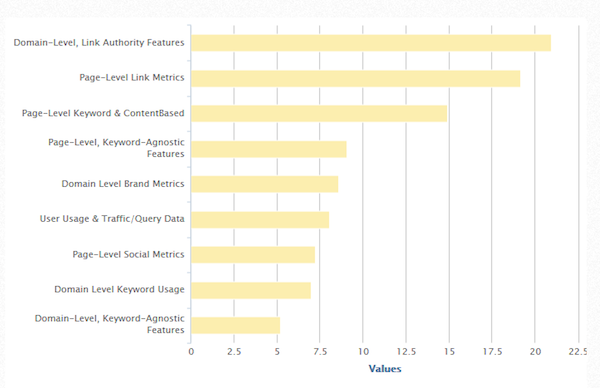

Here’s what the respondents to the survey had to say:

Ranking Factors or Correlation Estimates?

Before we get into the details, let me just say that the first problem I have with this report is that it’s poorly named. Calling it a search engine ranking factors report might lead some to believe it details search engine ranking factors. Duh! Wrong!

The report undoubtedly touches on a number of actual ranking factors (and signals), but where it does, it’s pure coincidence. What this report describes would be more aptly named the Moz Search Correlations Report.

Now to be fair, the report, and every mention of it, is quite clear on the fact that what it calls out is simply correlation. They aren’t presenting it as a listing of actual search engine ranking factors. But the name is misleading. Terribly so.

Moz CEO and founder Rand Fishkin, like the rest of us, has surely noticed that the reading comprehension skills demonstrated by a lot of netizens are less than exemplary. If anything sticks in folks’ minds, it’ll be the title. Calling it something it isn’t just puts more people at risk, in my opinion. The report itself is upfront about what it measures, but the title still gives the wrong impression.

Matt Peters is the math wizard behind the report. You don’t get a PhD in applied math just by showing up for a lot of lectures. You have to be capable of the sort of thought processes that would make most of our brains melt. Normally, I’d probably crack some math geek jokes, but frankly, I’m impressed. So kudos to you, Dr. Peters… a nice piece of work!

I also want to commend Fishkin and his team for once again making their findings amazingly transparent. All of the charts provided by Moz.com are embeddable and you can even download the raw data if you care to try your own analysis.

Here are the basics of the correlation phase of the study:

How Close is the Report to Reality?

This is a tough question, because outside of Google, nobody really knows. But from what I saw, it certainly seems to be a rational approach to estimating the correlation between characteristics and effects.

Odds are that it’s more accurate in some areas than in others, but that’s the nature of our business. We make our observations, form a hypothesis, and step gingerly out onto the ice.

There’s no way of being truly certain that even the most prominent aspects of this report are causative factors. In fact, I think it’s quite likely that many aren’t factors at all, but merely signals (Yes, Virginia, there’s a difference).
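To make that point concrete, here’s a small simulation of how two things can correlate strongly without either one causing the other. Everything in it is made up for illustration: the hidden `quality` value, the `shares` and `ranking_score` variables, and the noise levels are my own assumptions, not anything taken from the Moz data.

```python
import random


def pearson(xs, ys):
    """Plain Pearson correlation coefficient for two equal-length lists."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sum((x - mean_x) ** 2 for x in xs) ** 0.5
    spread_y = sum((y - mean_y) ** 2 for y in ys) ** 0.5
    return cov / (spread_x * spread_y)


rng = random.Random(42)  # fixed seed so the run is repeatable
n = 2000

# Hypothetical hidden factor that drives BOTH observed variables.
quality = [rng.random() for _ in range(n)]

# Neither of these influences the other; each is just quality plus noise.
shares = [q + rng.gauss(0, 0.1) for q in quality]
ranking_score = [q + rng.gauss(0, 0.1) for q in quality]

# The two observed variables look strongly related...
raw_corr = pearson(shares, ranking_score)

# ...but once the hidden factor is subtracted out, the relationship vanishes.
shares_left = [s - q for s, q in zip(shares, quality)]
ranking_left = [r - q for r, q in zip(ranking_score, quality)]
resid_corr = pearson(shares_left, ranking_left)

print(f"correlation as observed:          {raw_corr:.2f}")
print(f"correlation with quality removed: {resid_corr:.2f}")
```

In the real world we never get to see the hidden factor directly, which is exactly why a high correlation can only ever be a hint.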

If you’re interested in the methodology they used, by all means, check it out here. I encourage you to do so. I think it’ll give you some appreciation of the lengths they went to in an effort to provide results that were as meaningful as possible. You can also see a list of the contributors who participated in the survey.

I’ve always been a believer in the value of critical thinking. You decide which information might be useful, try to gather and isolate data, determine which data is the most reliable and valuable, and finally form a plan for exploiting that data. As Cyrus Shepard cited in his recent write-up on Moz, Edward Tufte called it right when he said:

“Correlation is not causation but it sure is a hint.”

In this case, scientific analysis is certainly the best way to fine-tune your critical thinking. A good argument could be made that it’s the only viable way. So let’s take a look at what the team at Moz put together and try to break it down.

The Components

Moz has developed some metrics that play an integral part in their analyses: MozRank, MozTrust, Domain Authority, and Page Authority. These are all scored on a logarithmic scale, which basically means that the higher a score already is, the harder each additional point becomes to earn. Progressing from two to three is much easier than moving from seven to eight.
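For anyone who wants to see why a logarithmic scale behaves that way, here’s a rough sketch. Moz’s actual formulas aren’t given in the report, so I’m assuming a plain base-10 scale and an unnamed "raw signal" purely for illustration. The function names are mine.

```python
def raw_needed(score, base=10):
    """Raw signal required to reach a given score on an ASSUMED base-10 log scale."""
    return base ** score


def cost_of_next_point(current_score, base=10):
    """Extra raw signal needed to move from current_score to current_score + 1."""
    return raw_needed(current_score + 1, base) - raw_needed(current_score, base)


# Moving from 2 to 3 versus moving from 7 to 8.
print(cost_of_next_point(2))  # 900
print(cost_of_next_point(7))  # 90000000
```

Under that assumption, the step from seven to eight costs 100,000 times as much as the step from two to three. The real multiplier depends on whatever base and inputs Moz actually uses, but the shape of the curve is the point.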

I know a lot of people complain that it’s presumptuous of Moz to establish their own metrics, but for a couple of reasons, I think it made sense.

- Having some sort of rank as a scale is useful, and when you come right down to it, others could have as easily created their own. Some, in fact, did, although I (probably wisely) refrained from launching SheldonRank. Moz has the ability to amass an adequate data set, the talent to develop tools that rely on such a metric, and the wherewithal to put it to widespread use.

- Nearly any metric, when applied equally against all data, can have the effect of greatly simplifying an analysis, without compromising the data. At the very least, trends can be detected, even if the metrics are skewed. In this case, I think the metrics are about as solid as we can realistically hope for.

I’ve heard some say that Toolbar PageRank would have been a better choice than MozRank. That, however, makes no sense to me. Even if it were still being updated, we have no concrete idea of what factors play a part in its assignment, so its unknown makeup and constantly shifting value would rule it out as a viable metric. MozRank can at least be known not to vary during the analysis of an entire data set.

So, for my part, I understand and accept MozRank, MozTrust, Domain Authority, and Page Authority as useful components in this report. Something was needed that could be uniformly applied against all entities being analyzed. They do the job.

I’d say that the list of nearly 90 different factors that were looked at is comprehensive. I was pleased to see that they added structured data markup to their analysis.

The only oddity I noticed is that while there’s a factor for schema.org, no other markup formats, such as RDFa, seem to be called out. Whether other formats were considered is unclear, but since Google, Bing, and Yahoo all recognize RDFa markup, I would think it should be included, even if bundled with schema.org. It would be helpful to have some clarity on this.

There were a few factors that I might question the need for, but again, since they’re being applied universally, there’s no harm and they may bring additional value to the findings.

The Findings

While I didn’t even try to evaluate the actual calculations used, from looking at the downloadable spreadsheet, it would seem that the final calculations may have been the least laborious part of the process. Their decision to use Spearman correlation also makes sense to me.
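If Spearman correlation is new to you, the idea is simple: rank both lists, then measure how closely the two sets of ranks agree. Below is a minimal sketch for a single keyword. The positions and the `metric` values are invented for illustration, and this is not Moz’s code; their methodology page is the place to look for how they actually computed and aggregated the numbers.

```python
def average_ranks(values):
    """Return 1-based ranks, giving tied values the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j across a run of equal values (a tie group).
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # average of the 1-based ranks i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def pearson(xs, ys):
    """Plain Pearson correlation coefficient."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sum((x - mean_x) ** 2 for x in xs) ** 0.5
    spread_y = sum((y - mean_y) ** 2 for y in ys) ** 0.5
    return cov / (spread_x * spread_y)


def spearman(xs, ys):
    """Spearman correlation: Pearson correlation computed on the ranks."""
    return pearson(average_ranks(xs), average_ranks(ys))


# Hypothetical top-10 results for one keyword and a made-up page metric.
positions = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
metric = [62, 70, 55, 58, 41, 47, 39, 44, 30, 35]

# Negate positions so a positive result means "higher metric, better ranking".
rho = spearman([-p for p in positions], metric)
print(round(rho, 3))  # 0.903
```

Because only the ordering matters, Spearman doesn’t care whether the metric is linear, logarithmic, or something stranger, which is a sensible property when you’re comparing search positions against wildly different kinds of measurements.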

At the end of the day, I think this report was put together in as solid a fashion as could be hoped for, under the circumstances. I think the sampling was sufficiently large to give us a fair representation. A broad spectrum of keywords was used, which does tend to skew the findings a bit. But we all know that every vertical and location, along with a slew of other variables, can cause deviation. In my opinion, they addressed this reasonably well.

I would never suggest that anyone take this data as the basis for a roadmap in planning their campaign and neither has anyone at Moz. It’s simply what it states: a report on detected correlations.

The relative importance of one factor over another could vary significantly in your niche. But it serves beautifully in one regard: it makes us think. Used prudently, it offers value.

My recommendation would be to go through their charts and see what was measured and how, then spend some time with their awesome interactive Search Engine Correlation Data chart. Play around with it, sorting the results by the 10 categories at the top of that chart, and compare it to your own impressions of how things are working for you.

Closing

I began this exercise fully expecting to find another of those half-baked, pseudo-scientific studies that are far too common in our line of work. Had that been the case, this would have been heavily snark-ridden. But the truth is, I think this report provides exactly what it claims, in as accurate a fashion as is reasonably possible.

Some of the findings in the correlation study surprise me quite a lot, making me take a very critical look at the methodology. At the end of the day, it’s still just correlation – a number of other factors could have caused the result. But as Tufte said, it’s a hint, so I think it raises some possibilities that are worth examining.

My opinion: Like any correlation study, take the findings with a grain of salt. But all in all, I think the Moz report is worthy of consideration.

Except for the title. That needs to be changed.